Linear Regression

A Brief Review

University of Cincinnati

About me

Ames housing data

- Data describing the sale of individual residential property in Ames, Iowa from 2006 to 2010

- There are 2930 observations on 81 variables involved in assessing home values:

- 23 nominal

- 23 ordinal

- 14 discrete

- 20 continuous

- Paper: https://jse.amstat.org/v19n3/decock.pdf

Ames housing data

Ames housing data

We’ll focus on a handful of variables:

Sale_Price- Sale price of the house / $10K (response variable)Gr_Liv_Area- Above grade (ground) living area square feetOverall_Qual- Rates the overall material and finish of the house

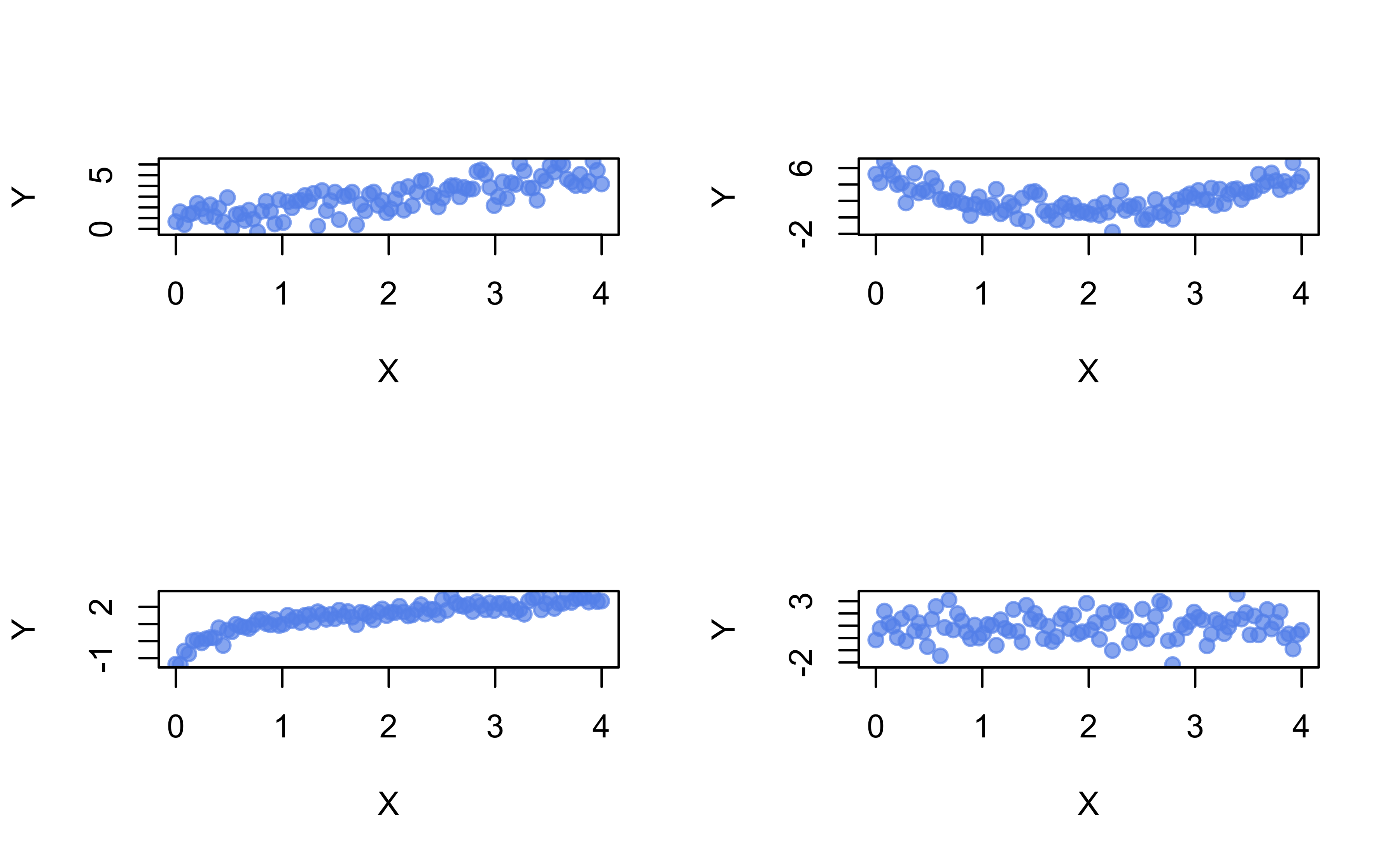

Statistical relationships

Show R code

# Simulate data from different SLR models

set.seed(101) # for reproducibility

x <- seq(from = 0, to = 4, length = 100)

y <- cbind(

1 + x + rnorm(length(x)), # linear

1 + (x - 2)^2 + rnorm(length(x)), # quadratic

1 + log(x + 0.1) + rnorm(length(x), sd = 0.3), # logarithmic

1 + rnorm(length(x)) # no association

)

# Scatterplot of X vs. each Y in a 2-by-2 grid

par(mfrow = c(2, 2))

for (i in 1:4) {

plot(x, y[, i], col = adjustcolor("cornflowerblue", alpha.f = 0.7),

pch = 19, xlab = "X", ylab = "Y")

}

Are \(X\) and \(Y\) correlated?

Pearson’s correlation coefficient

- The (Pearson) correlation between two random variables \(X\) and \(Y\) is given by

\[Cor\left(X, Y\right) = \rho = \frac{Cov\left(X,Y\right)}{\sigma_X\sigma_Y}\]

- Given a sample of \(n\) pairs \(\left\{\left(x_i, y_i\right)\right\}_{i=1}^n\), we estimate \(\rho\) with \(r = S_{xy} / \sqrt{S_{xx}S_{yy}}\), where, for example, \[S_{xx} = \sum_{i=1}^n\left(x_i - \bar{x}\right)^2 \text{ and } S_{xy} = \sum_{i=1}^n\left(x_i - \bar{x}\right)\left(y_i - \bar{y}\right)\]

Pearson’s correlation coefficient

Range: \(-1 \le r \le 1\)

What does it measure?

- Pearson’s correlation coefficient is a unitless measure of the strength of the linear relationship between two variables

Other useful correlation measures also exist:

Spearman’s rank correlation (or Spearman’s \(\rho\)) only assumes a monotonic relationship between \(X\) and \(Y\)

- Equivalent to computing \(r\) on the ranks of \(X\) and \(Y\)

Pearson’s correlation coefficient

It is common to test the hypothesis \(H_0: \rho = 0\) vs. \(H_1: \rho \ne 0\)

Rejecting \(H_0\) is only evidence that \(\rho\) is not exactly zero (NOT VERY USEFUL, OR INTERESTING)

A \(p\)-value does not measure the magnitude/strength of the (linear) association

Sample size affects the \(p\)-value! 😱

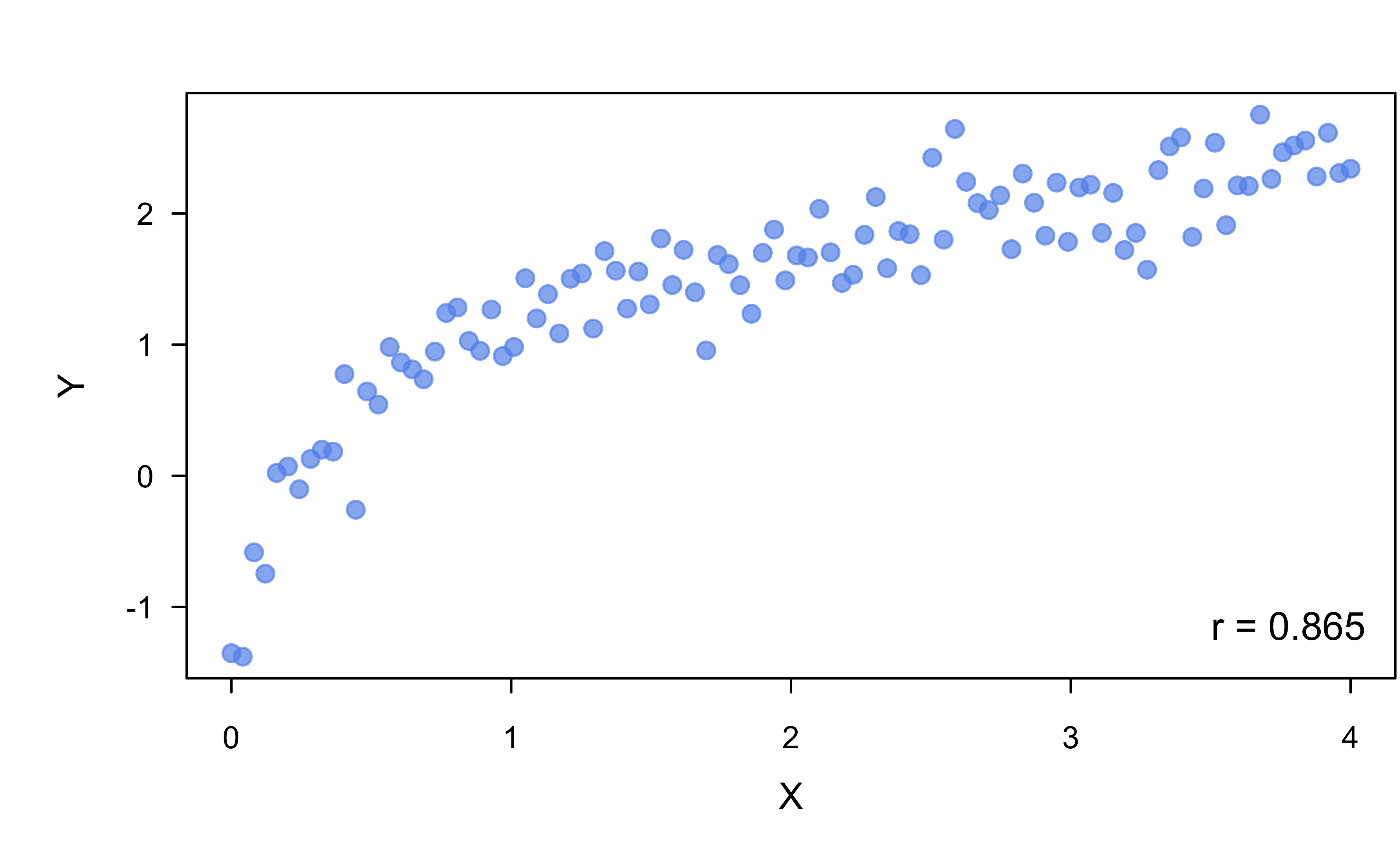

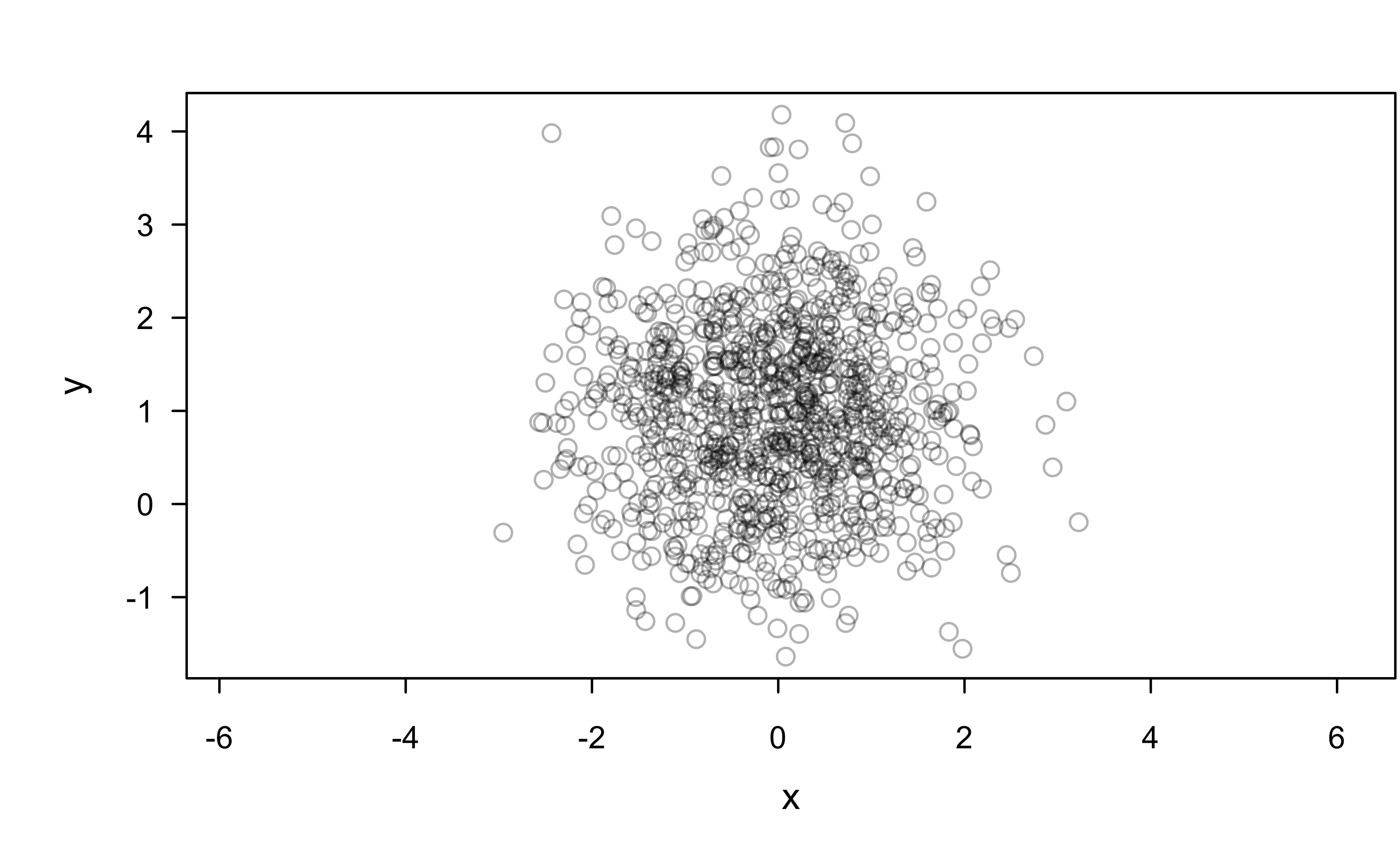

Are \(x\) and \(y\) correlated?

Pearson’s correlation coefficient

Show R code

Pearson's product-moment correlation

data: x and y

t = -0.0028012, df = 98, p-value = 0.9978

alternative hypothesis: true correlation is not equal to 0

95 percent confidence interval:

-0.1966901 0.1961461

sample estimates:

cor

-0.0002829617 Show R code

Pearson's product-moment correlation

data: x and y

t = 3.731, df = 9999998, p-value = 0.0001907

alternative hypothesis: true correlation is not equal to 0

95 percent confidence interval:

0.0005600528 0.0017996412

sample estimates:

cor

0.001179847 The real question is, are \(X\) and \(Y\) practically uncorrelated?

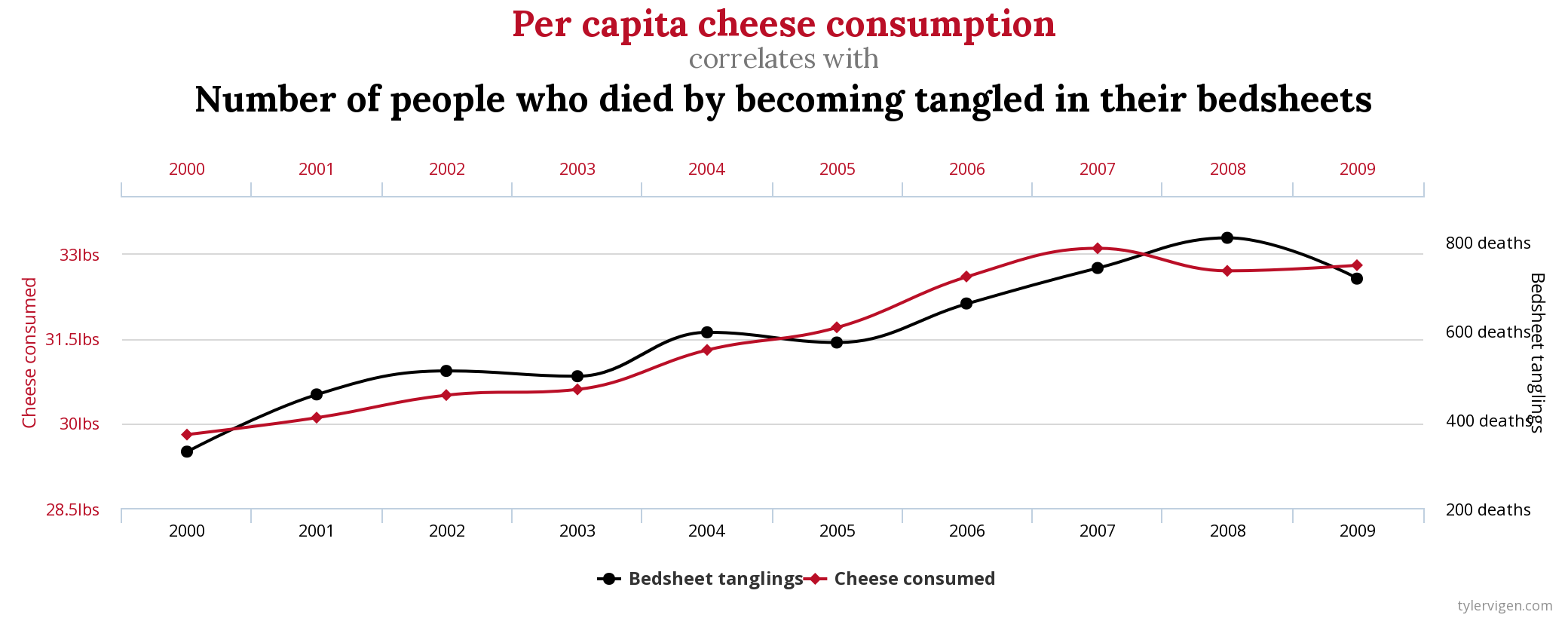

Correlation is not causation

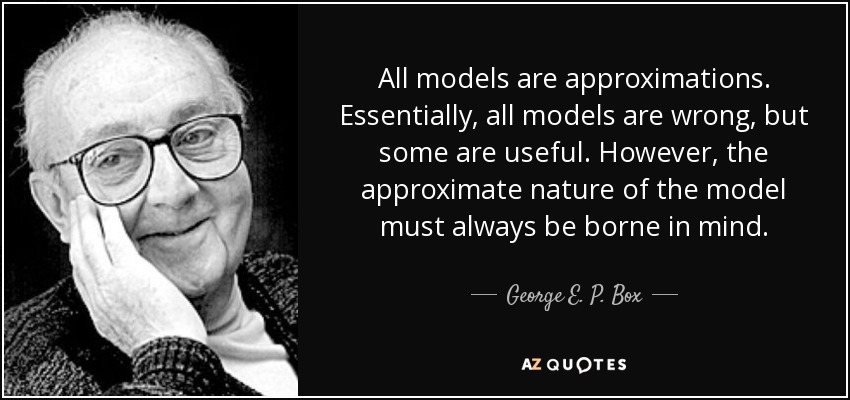

All models are wrong!

Also, see this talk by my old adviser, Thad Tarpey: “All Models are Right… most are useless.”

Simple Linear Regression (SLR)

Pearson’s correlation vs. SLR

There’s a formal relationship between Pearson’s correlation coefficient (\(\rho\)) and the SLR model

“Simple” linear relationships can be described by an intercept and slope:

- \(y = mx + b\) (algebra)

- \(\mu = \beta_0 + \beta_1x\) (statistics)

“Simple” here means two variables, \(x\) and \(y\) (but \(y\) can be linearly related to several variables)

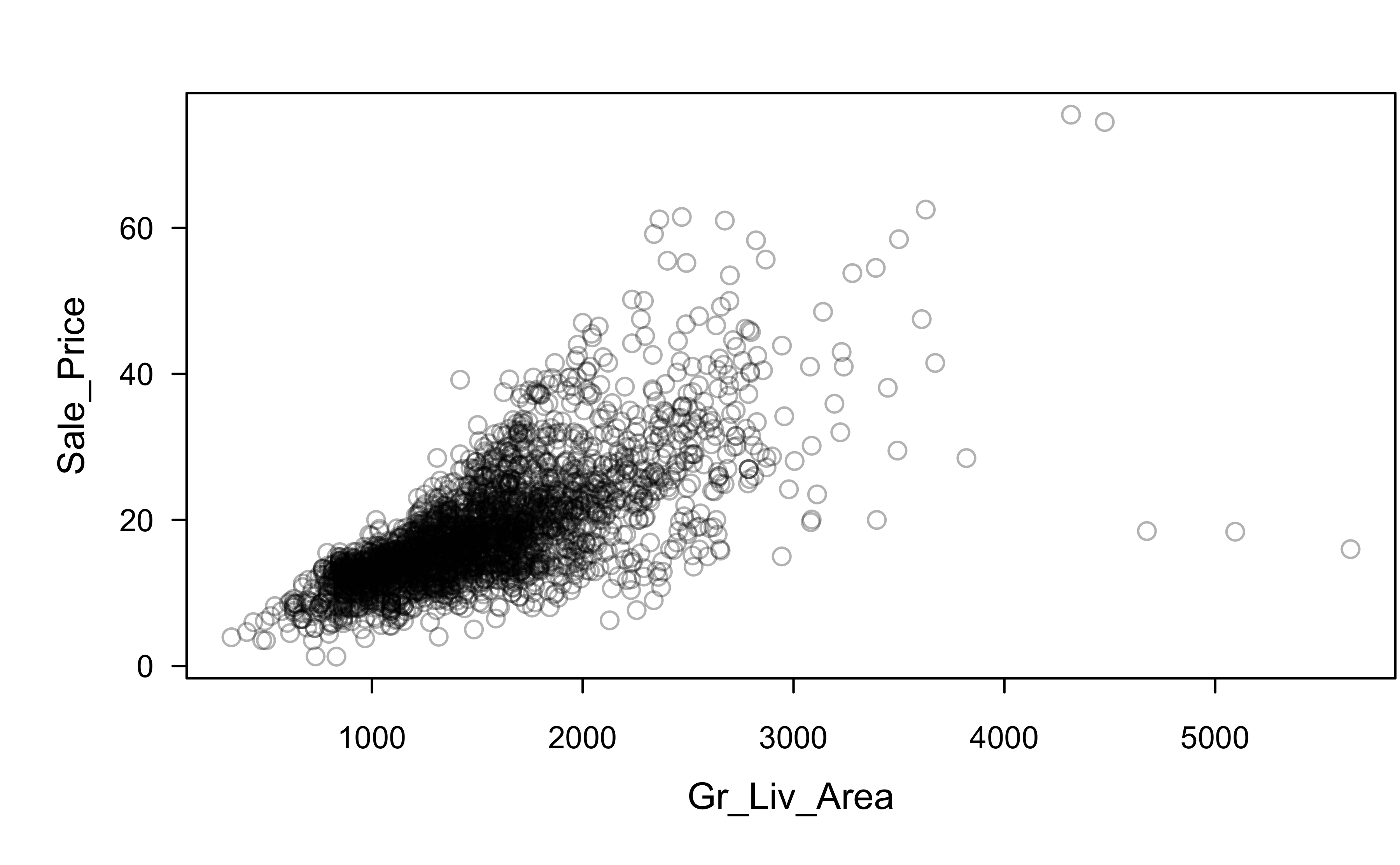

Example: Ames housing

Check out this paper for useful background on the Ames housing data in regression

Example: Ames housing

[,1] [,2]

[1,] 21.50 1656

[2,] 10.50 896

[3,] 17.20 1329

[4,] 24.40 2110

[5,] 18.99 1629

[6,] 19.55 1604

Pearson's product-moment correlation

data: ames$Sale_Price and ames$Gr_Liv_Area

t = 54.061, df = 2928, p-value < 2.2e-16

alternative hypothesis: true correlation is not equal to 0

95 percent confidence interval:

0.6881814 0.7244502

sample estimates:

cor

0.7067799 This doesn’t tell us much about the nature of the linear relationship between Gr_Liv_Area and Sale_Price

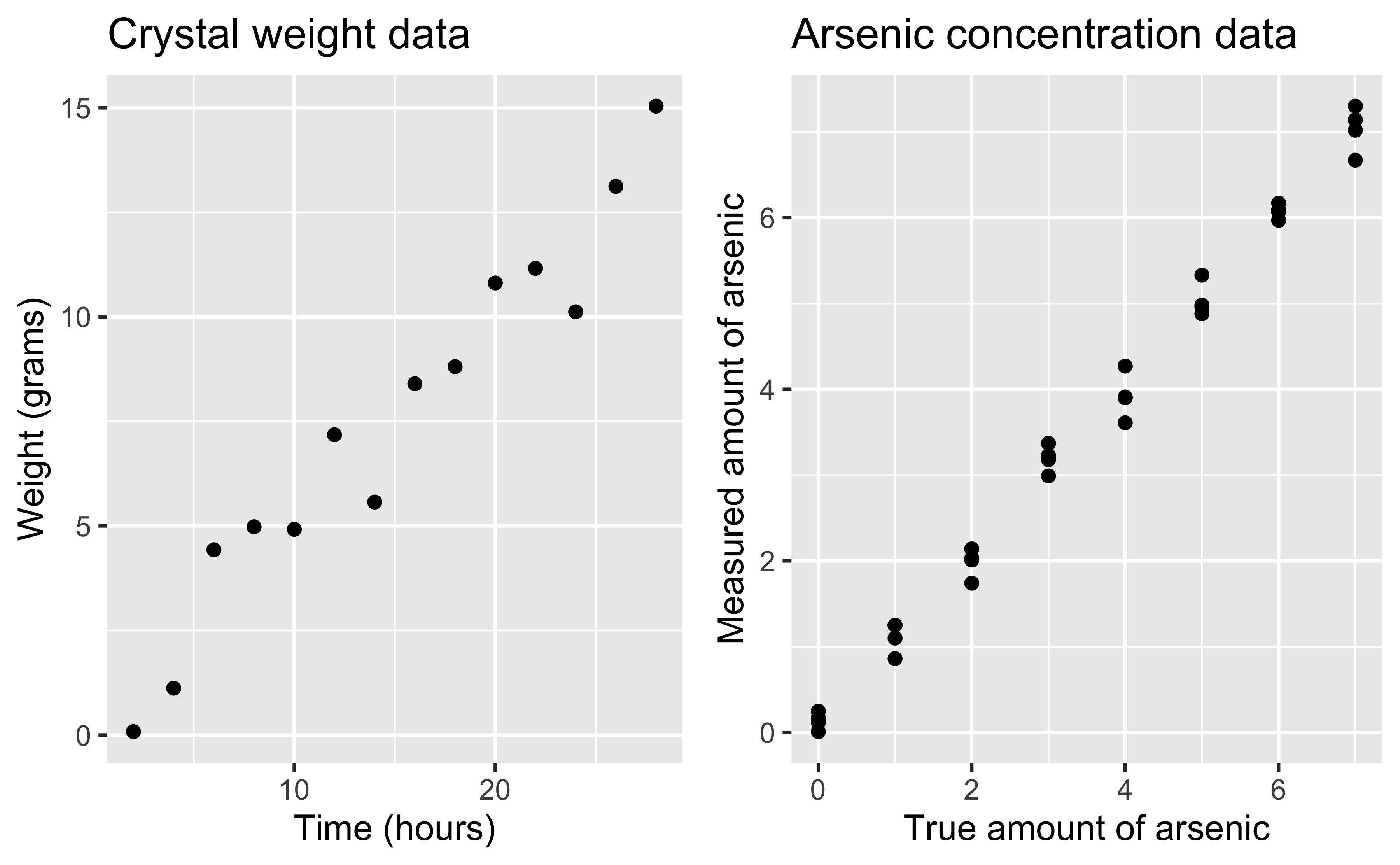

Statistical relationships

Show R code

library(ggplot2)

p1 <- ggplot(investr::crystal, aes(x = time, y = weight)) +

geom_point() +

labs(x = "Time (hours)",

y = "Weight (grams)",

title = "Crystal weight data")

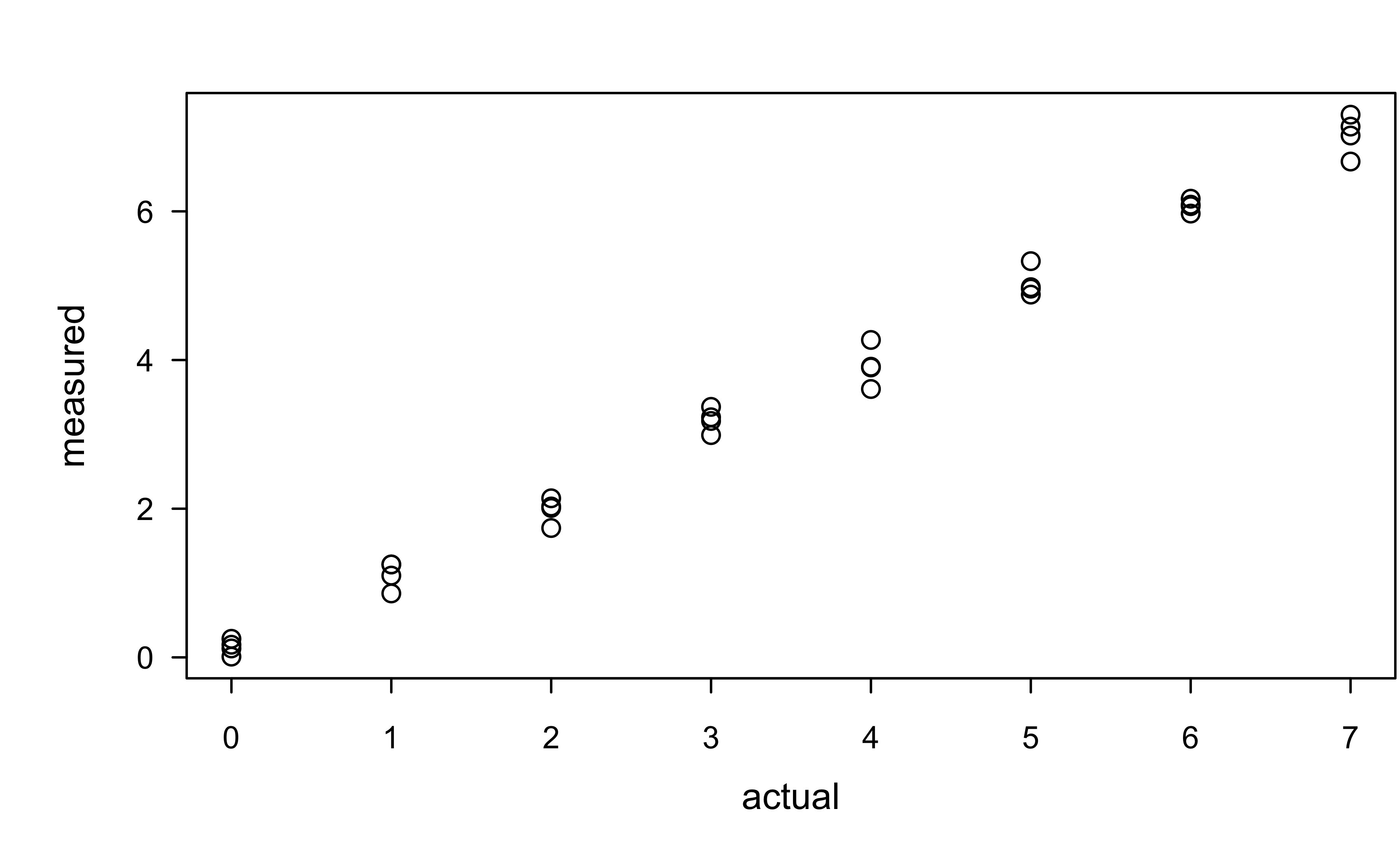

p2 <- ggplot(investr::arsenic, aes(x = actual, y = measured)) +

geom_point() +

labs(x = "True amount of arsenic",

y = "Measured amount of arsenic",

title = "Arsenic concentration data")

gridExtra::grid.arrange(p1, p2, nrow = 1)

Examples of statistical relationships

Simple linear regression: \(Y = \beta_0 + \beta_1 X + \epsilon\)

Multiple linear regression: \(Y = \beta_0 + \sum_{i=1}^p \beta_p X_p + \epsilon\)

Polynomial regression: \(Y = \beta_0 + \sum_{i=1}^p \beta_p X^p + \epsilon\)

Logarithmic: \(Y = \beta_0 + \beta_1 \log\left(X + 0.1\right) + \epsilon\)

Nonlinear regression: \(Y = \frac{\beta_1 X}{\left(\beta_2 + X\right)} + \epsilon\)

Multiplicative: \(Y = \beta X \epsilon\)

- \(\log\left(Y\right) = \alpha + \log\left(X\right) + \log\left(\epsilon\right)\)

Assuming \(\epsilon \sim D\left(\mu, \sigma\right)\)

Simple linear regression (SLR)

Data: \(\left\{\left(X_i, Y_i\right)\right\}_{i=1}^n\)

Model: \(Y_i = \beta_0 + \beta_1 X_i + \epsilon_i\)

\(Y_i\) is a continuous response

\(X_i\) is a continuous predictor

\(\beta_0\) is the intercept of the regression line (also called the bias term)

\(\beta_1\) is the slope of the regression line

\(\epsilon_i \stackrel{iid}{\sim} D\left(0, \sigma^2\right)\)

Assumptions about the errors \(\epsilon_i\)

For \(i\) and \(j\) in \(\left\{1, 2, \dots, n\right\}\) and \(i \ne j\)

\(\quad E\left(\epsilon_i\right) = 0\)

\(\quad Var\left(\epsilon_i\right) = \sigma^2\) (homoscedacticity 😱)

\(\quad Cov\left(\epsilon_i, \epsilon_j\right) = 0\) (independence)

Assumptions 1–3 can be summarized as \(\epsilon_i \stackrel{iid}{\sim} D\left(0, \sigma^2\right)\), where \(iid\) refers to independent and identically distributed.

Properties of SLR

Simple linear regression: \(Y_i = \beta_0 + \beta_1 X_i + \epsilon_i\)

- Assumes the model is linear in the regression coefficients \(\beta_0\) and \(\beta_1\)

The error term is a random variable; hence, \(Y_i\) is also a random variable (Why? 🤔)

- What is \(E\left(Y_i|X_i\right)\) and \(Var\left(Y_i|X_i\right)\)?

\(Cor\left(Y_i, Y_j\right) = 0\) \(\forall i \ne j\) (Why? 🤔)

Inference for a single variable

- Is it useful to test the hypothesis that

Sale_Price= $160K?

- No! Because

Sale_Priceis not a constant, but a random variable whose value varies from home to home (and year to year, etc.)

We’re more interested in questions such as:

- What is the chance that

Sale_Price> $160K? (above median sale price) - What is the chance that

Sale_Price< $105K? (lowest decile) - What is the chance that $129,500 <

Sale_Price< $213,500? (within IQR)

- What is the chance that

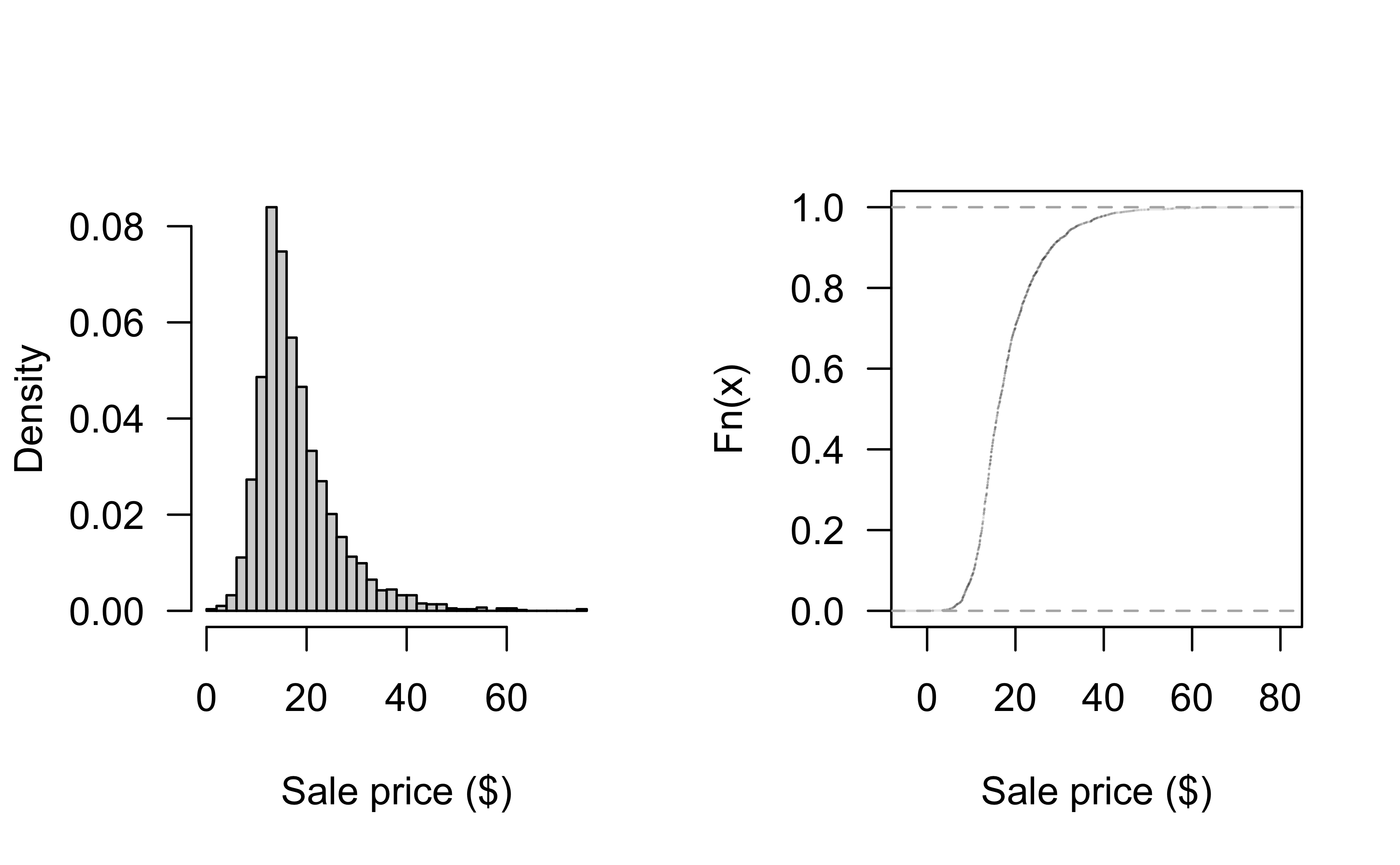

Distribution of Sale_Price

Can look at historgram and empirical CDF:

Distribution of Sale_Price

- Histograms and ECDFs are nonparammetric in nature

- A simple parametric approach might assume a particular distribution for

Sale_Price - For instance, we might assume

Sale_Price\(\sim N\left(\mu, \sigma^2\right)\) - How can we estimate \(\mu\) and \(\sigma^2\)?

- Is the normal distribution a reasonable assumption here?

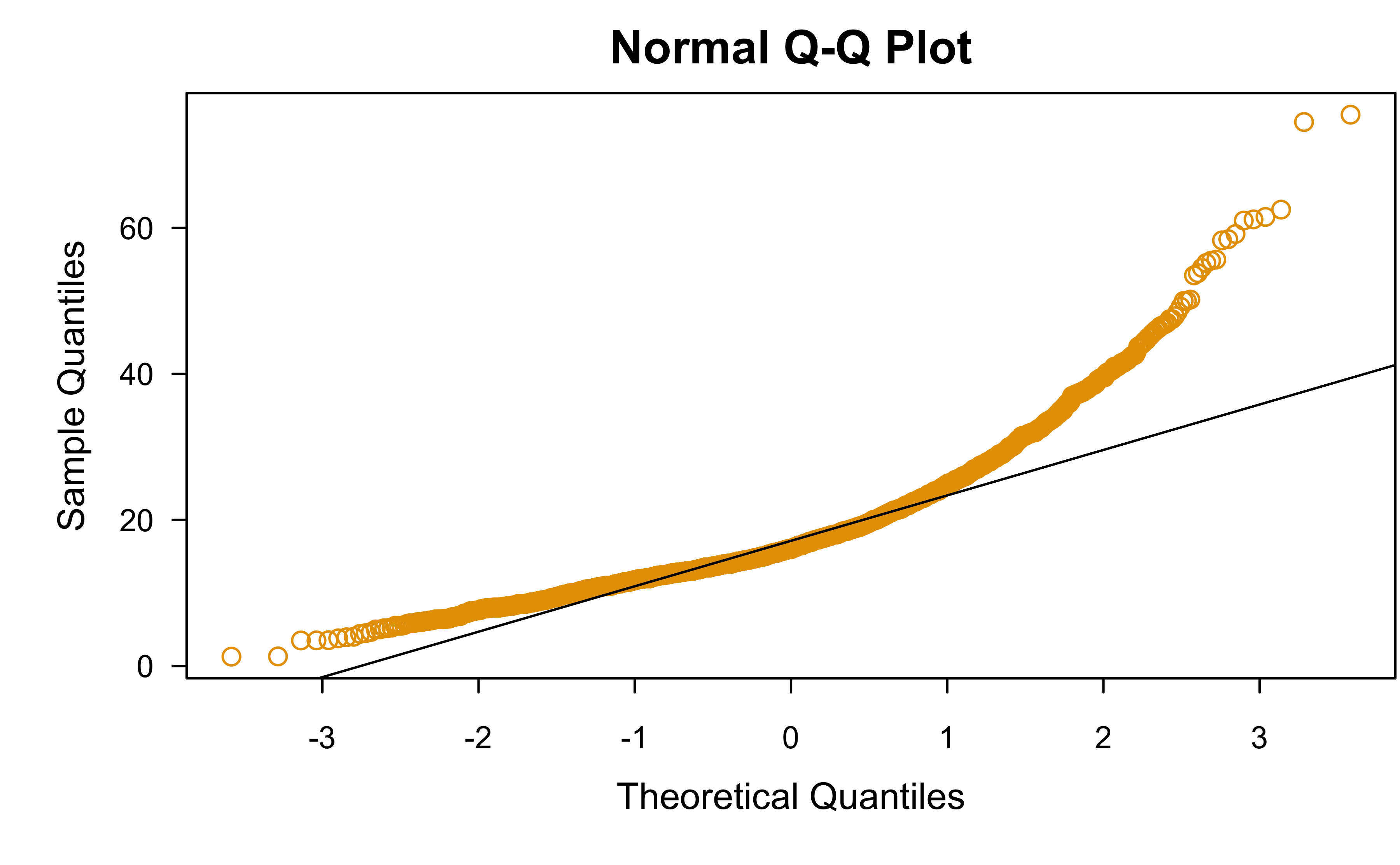

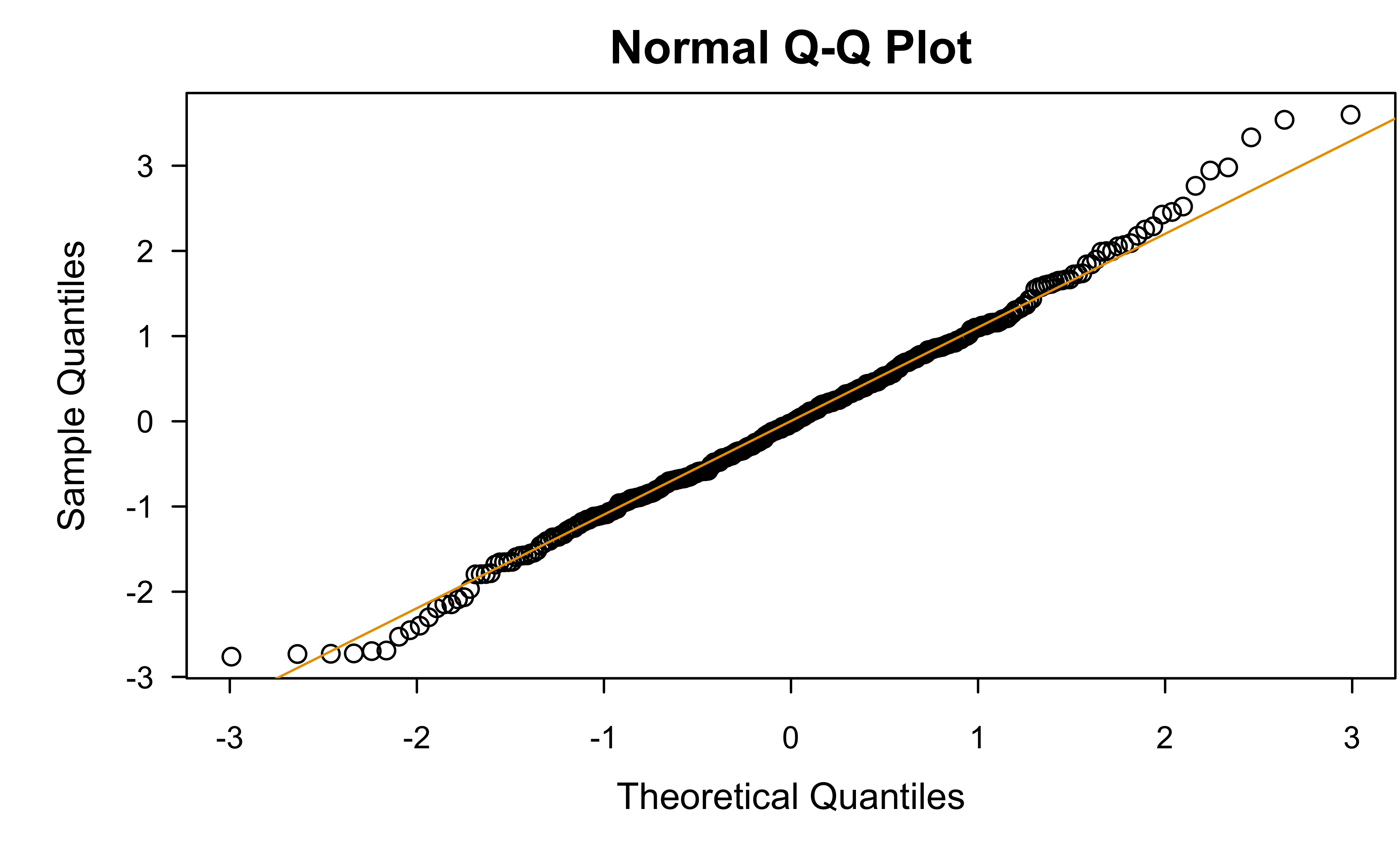

Normal QQ plot

Normal quantile-quantile (Q-Q) plot* can be used to asses the “normalityness” of a set of observations

Q-Q plots can, in general, be used to compare data with any distribution!

Normality tests 🤮

Normality tests, like the Shapiro-Wilk1 and Anderson-Darling tests, can also be used to assess normality

- I STRONGLY ADVISE AGAINST USING THEM!

No data is normally distributes, what we care about is whether enough a normal approximation is close enough!

Normality tests provide a \(p\)-value, which only gives a yes/no conclusion

Normality tests 🤮

Recall that \(p\)-values are a function of sample size!

Show R code

# Shapiro-Wilk test results vs. sample size

set.seed(101) # for reproducibility

x <- replicate(100, c(

shapiro.test(rt(10, df = 40))$p.value,

shapiro.test(rt(100, df = 40))$p.value,

shapiro.test(rt(500, df = 40))$p.value,

shapiro.test(rt(1000, df = 40))$p.value,

shapiro.test(rt(2500, df = 40))$p.value,

shapiro.test(rt(5000, df = 40))$p.value

))

rownames(x) <- paste0("n=", c(10, 100, 500, 1000, 2500, 5000))

rowMeans(x < 0.05) n=10 n=100 n=500 n=1000 n=2500 n=5000

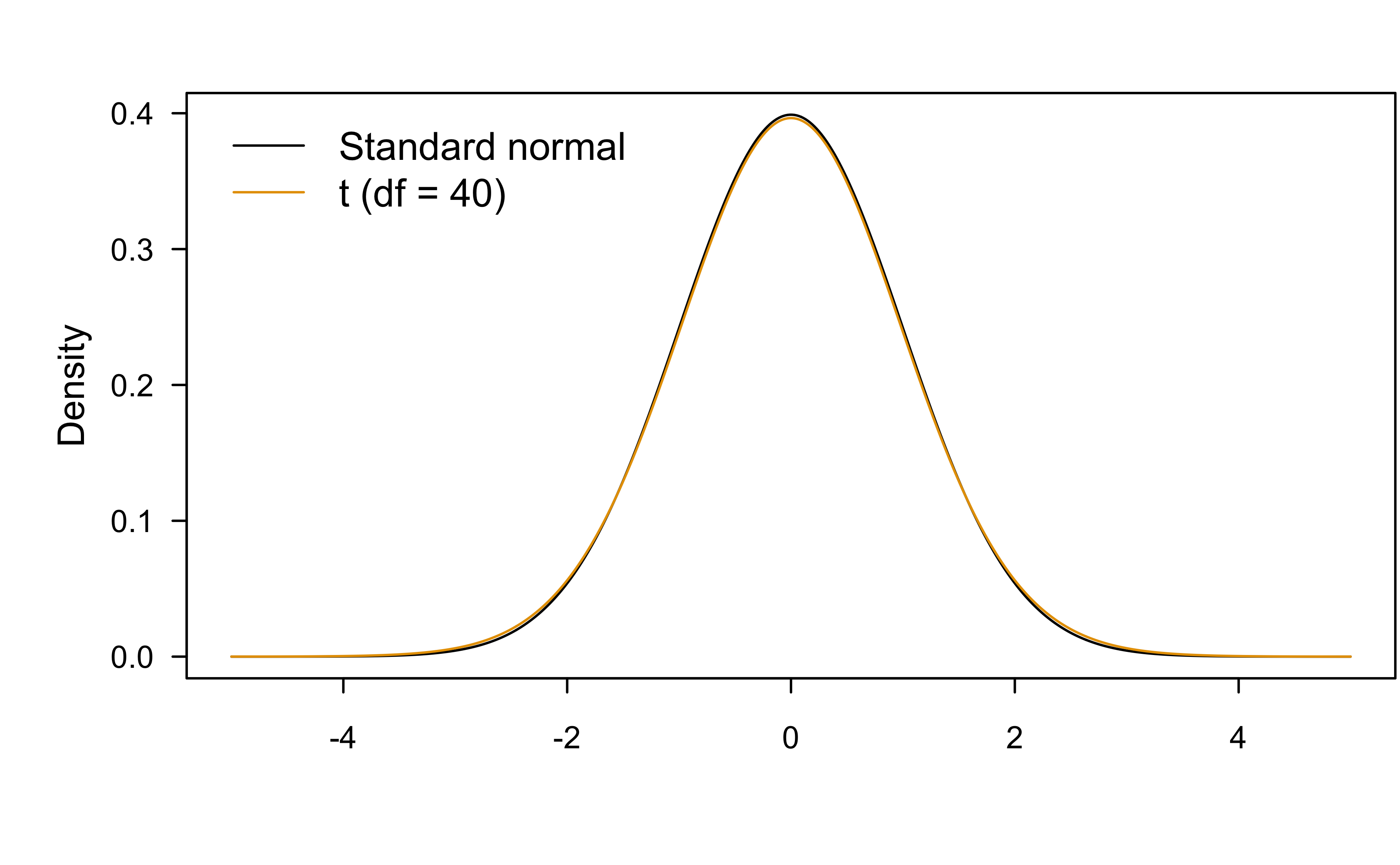

0.01 0.08 0.11 0.22 0.24 0.39 Normality tests 🤮

Are these two distributions significantly different?

Are these two distributions practically different?

What can we do if the normality assumption isn’t justified?

Try transformations

- Logarithm or square root for positive data

- Power transformation (like the well-known Box-Cox procedure)

Try a more appropriate distribution (e.g., Poisson or gamma distribution)

Try more advanced approaches, like the nonparametric bootstrapping!

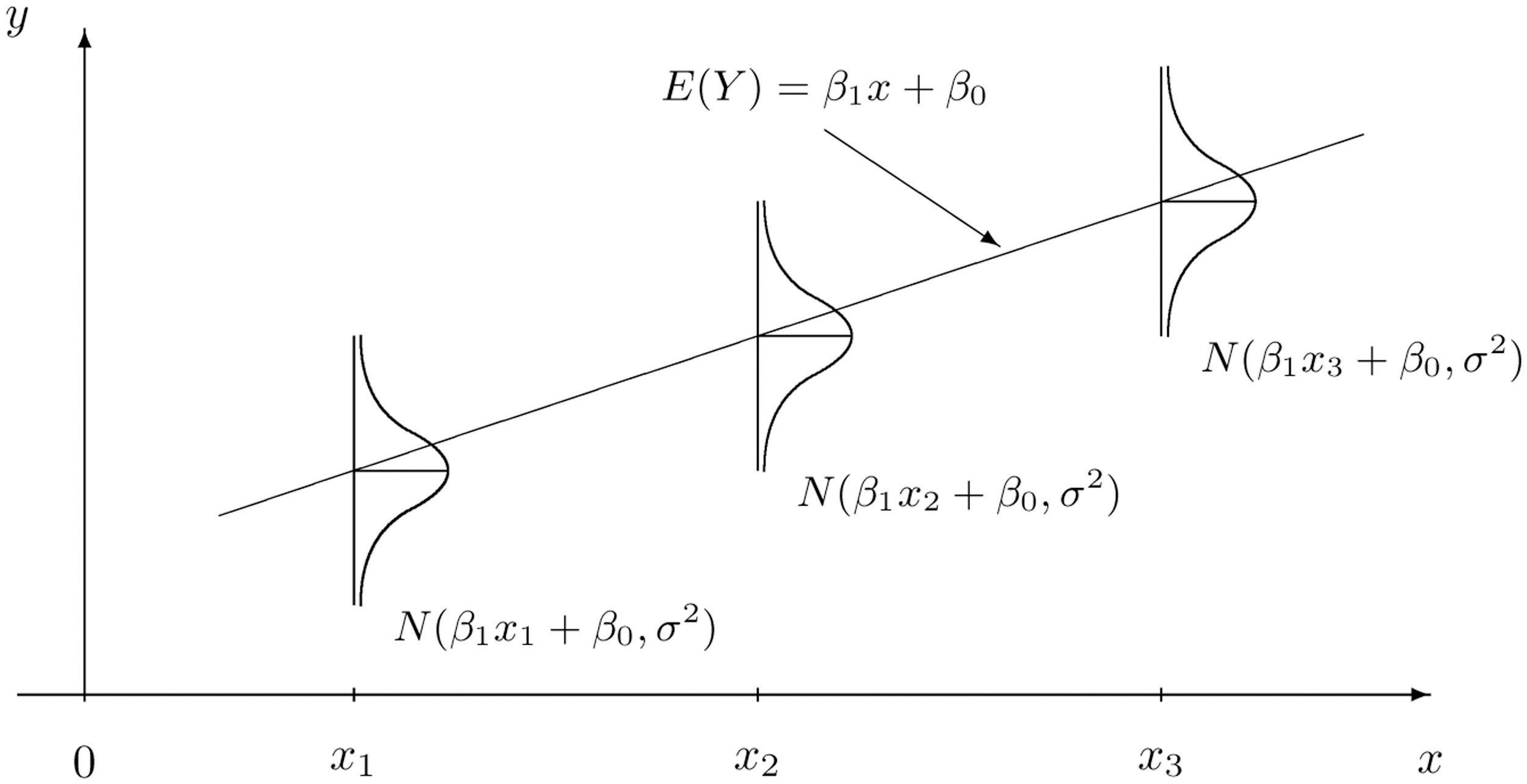

Modeling the mean response

- Assume that \(Y \sim N\left(\mu, \sigma^2\right)\), where

\[\mu = \mu\left(x\right) = \beta_0 + \beta_1 x = E\left(Y|x\right)\]

- In other words: \(Y \sim N\left(\beta_0 + \beta_1 x, \sigma^2\right)\)

- Alternatively, we could write \(Y = \beta_0 + \beta_1 x + \epsilon\), where \(\epsilon \sim N\left(0, \sigma^2\right)\)

- This is called the simple linear regression (SLR) model

The idea behind SLR

Arsenic experiment example

Is linear regression reasonable here?

Is linear regression reasonable here?

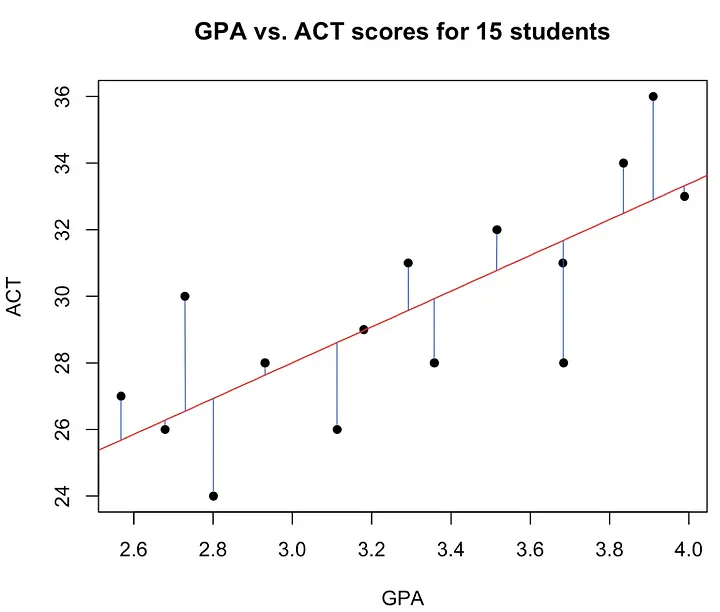

Least squares (LS) estimation

Idea of LS is to find \(\beta_0\) and \(\beta_1\) so that the sum of squared residuals (i.e., errors) is minimized: \[SSE = \sum_{i=1}^n\left(y_i - \beta_0 - \beta_1x_i\right)^2\]

Pretty straightforward optimization problem that leads to closed-form solution (but no point in memorizing the formulas!)

Concept of LS estimation

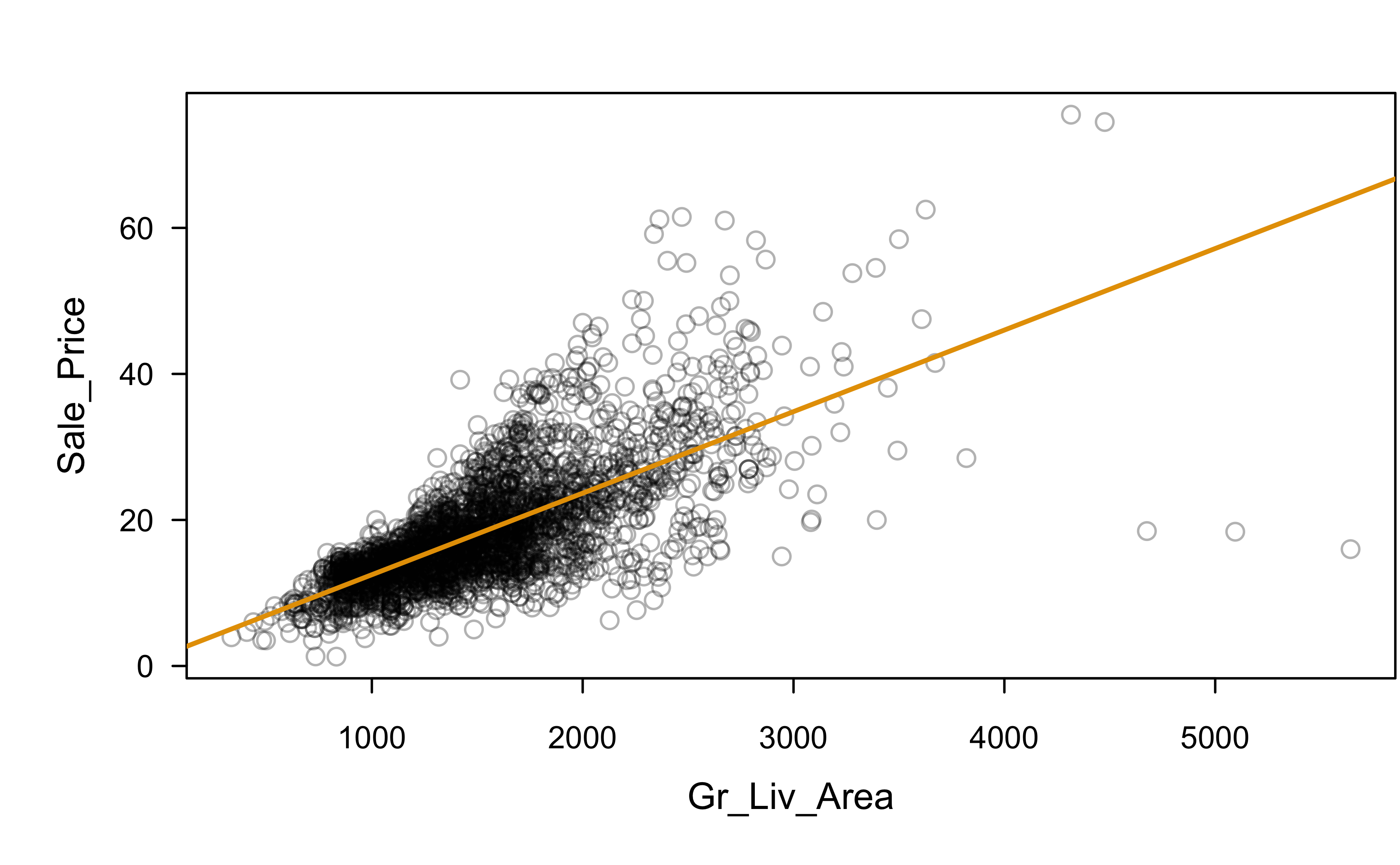

Sale_Price and Gr_Liv_Area

SLR fit

Call:

lm(formula = Sale_Price ~ Gr_Liv_Area, data = ames)

Residuals:

Min 1Q Median 3Q Max

-48.347 -3.022 -0.197 2.273 33.432

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 1.3289634 0.3269703 4.064 4.94e-05 ***

Gr_Liv_Area 0.0111694 0.0002066 54.061 < 2e-16 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 5.652 on 2928 degrees of freedom

Multiple R-squared: 0.4995, Adjusted R-squared: 0.4994

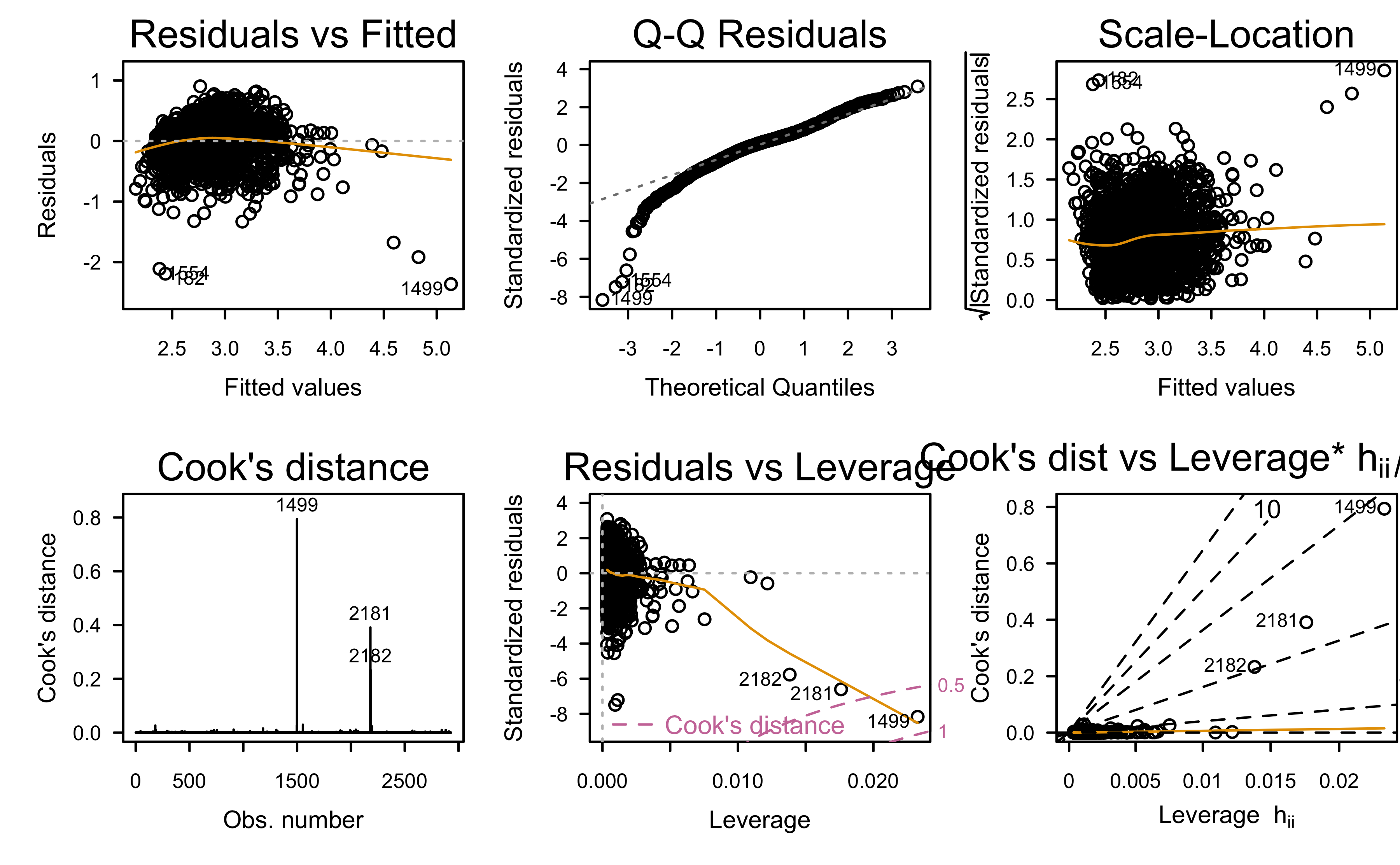

F-statistic: 2923 on 1 and 2928 DF, p-value: < 2.2e-16Is this a good fit?

Which assumptions seem violated to some degree?

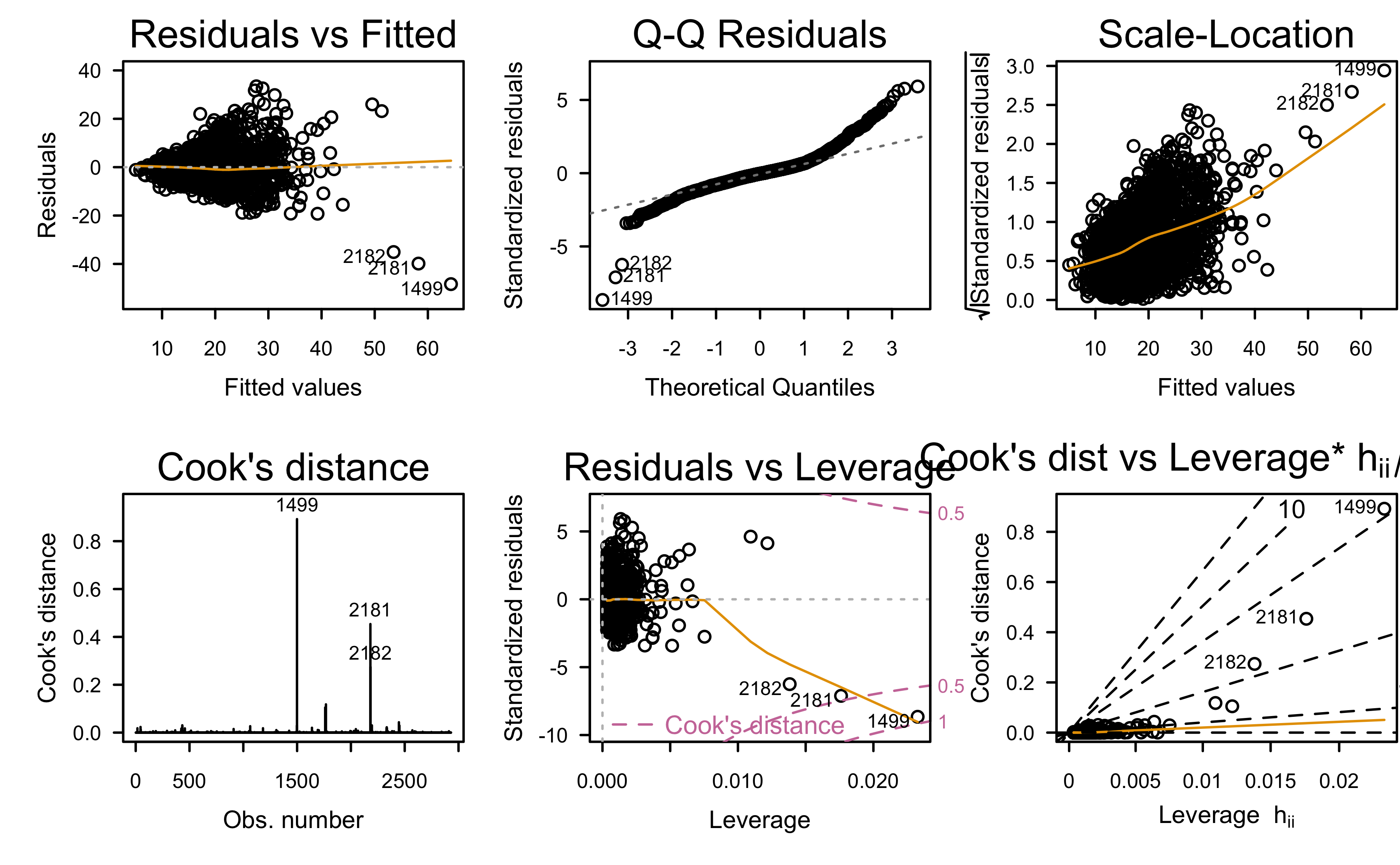

Residual diagnostics

- The standard residual is defined as \(e_i = y_i - \hat{y}_i\) and can be regarded as the observed error

- The residuals hold a lot of properties that make them useful for diagnosing potential issues with the model (e.g., suggesting potential transformations to try)

- Many other kinds of residuals exist for different purposes (e.g., standardized, studentized, jackknife or PRESS residuals, etc.)

Properties of the residuals

\(\sum_{i=1}^n e_i = 0\)

\(\sum_{i=1}^n e_i^2\) is a minimum

\(\sum_{i=1}^n X_ie_i = 0\)

\(\sum_{i=1}^n \hat{Y}_ie_i = 0\)

The LS regression line passes through the point \(\left(\bar{X}, \bar{Y}\right)\) (i.e., the center of the training data)

What can residual plots tell us?

Residuals vs. predictor values (checking non-linearity).

Residuals vs. fitted values (non-constant variance, non-linearity, and outliers)

Residuals vs. time or another sequence (checking independence)

Residuals vs. omitted predictor values (missing potentially important predictors)

Normal QQ plot of residuals (non-normality).

And much, much more!

Sale_Price ~ Gr_Liv_Area

Residual analysis:

What assumptions appear to be in violation?

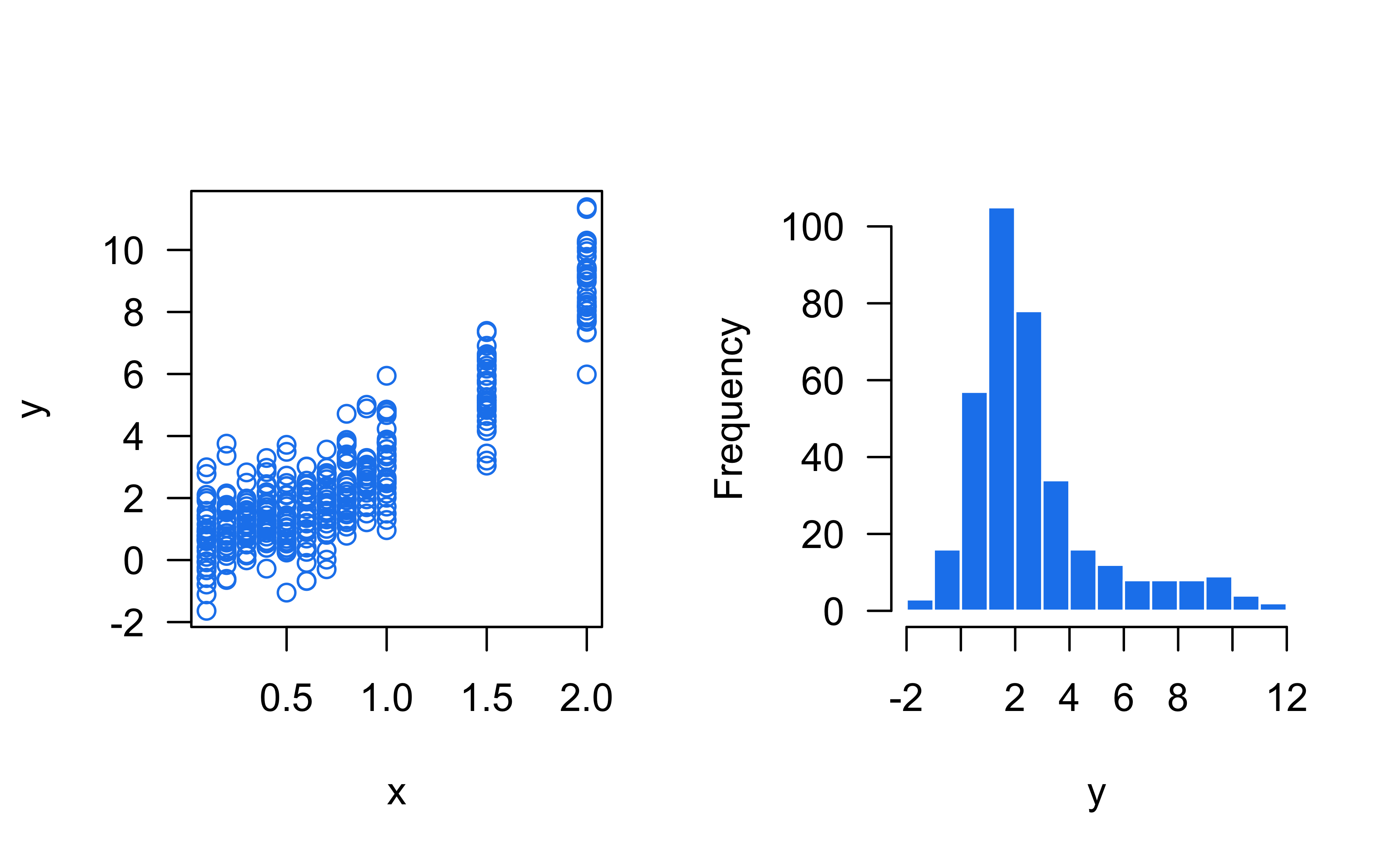

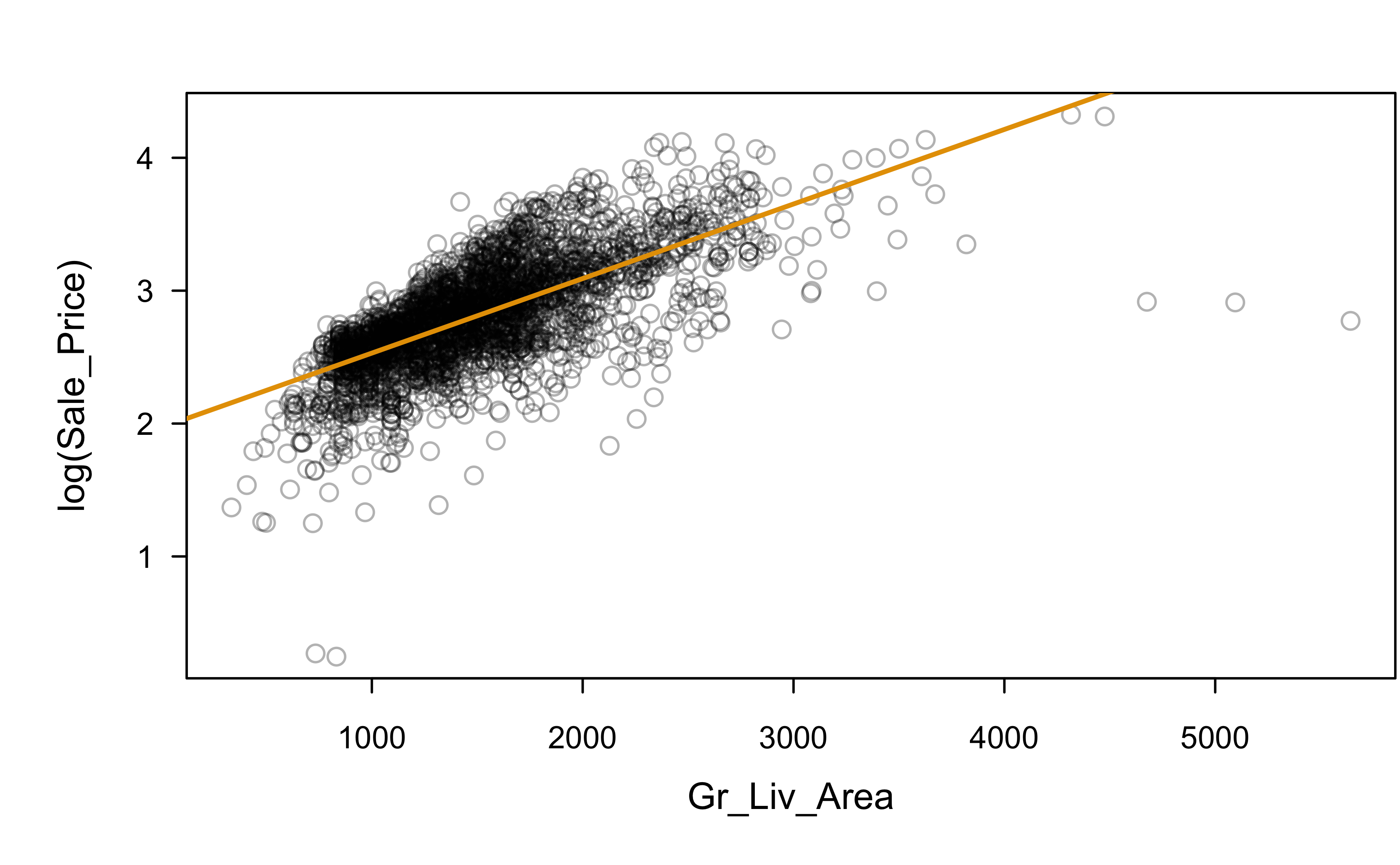

Let’s try a log transformation

Let’s try a log transformation

Call:

lm(formula = log(Sale_Price) ~ Gr_Liv_Area, data = ames)

Residuals:

Min 1Q Median 3Q Max

-2.36215 -0.15145 0.03091 0.16583 0.90332

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 1.9692014 0.0169355 116.28 <2e-16 ***

Gr_Liv_Area 0.0005611 0.0000107 52.43 <2e-16 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 0.2928 on 2928 degrees of freedom

Multiple R-squared: 0.4842, Adjusted R-squared: 0.484

F-statistic: 2749 on 1 and 2928 DF, p-value: < 2.2e-16log(Sale_Price) ~ Gr_Liv_Area

Residual analysis:

Any better?

Multiple Linear Regression (MLR)

MLR in a nutshell 🥜

The (normal) multiple linear regression model assumes \(Y \sim N\left(\mu\left(\boldsymbol{x}\right), \sigma^2\right)\), where \[\mu\left(\boldsymbol{x}\right) = \beta_0 + \sum_{i=1}^p \beta_i x_i = \boldsymbol{x}^\top\boldsymbol{\beta}\]

LS estimation still provides unbiased estimate of \(\boldsymbol{\beta} = \left(\beta_0, \beta_1, \dots, \beta_p\right)^\top\): \(\hat{\boldsymbol{\beta}} = \left(\boldsymbol{X}^\top\boldsymbol{X}\right)^{-1}\boldsymbol{X}^\top\boldsymbol{y}\)

Fitted values: \(\hat{\boldsymbol{y}} = \boldsymbol{X}\left(\boldsymbol{X}^\top\boldsymbol{X}\right)^{-1}\boldsymbol{X}^\top\boldsymbol{y} = \boldsymbol{H}\boldsymbol{y}\)

\(\boldsymbol{H}\) is the well-known “hat matrix”

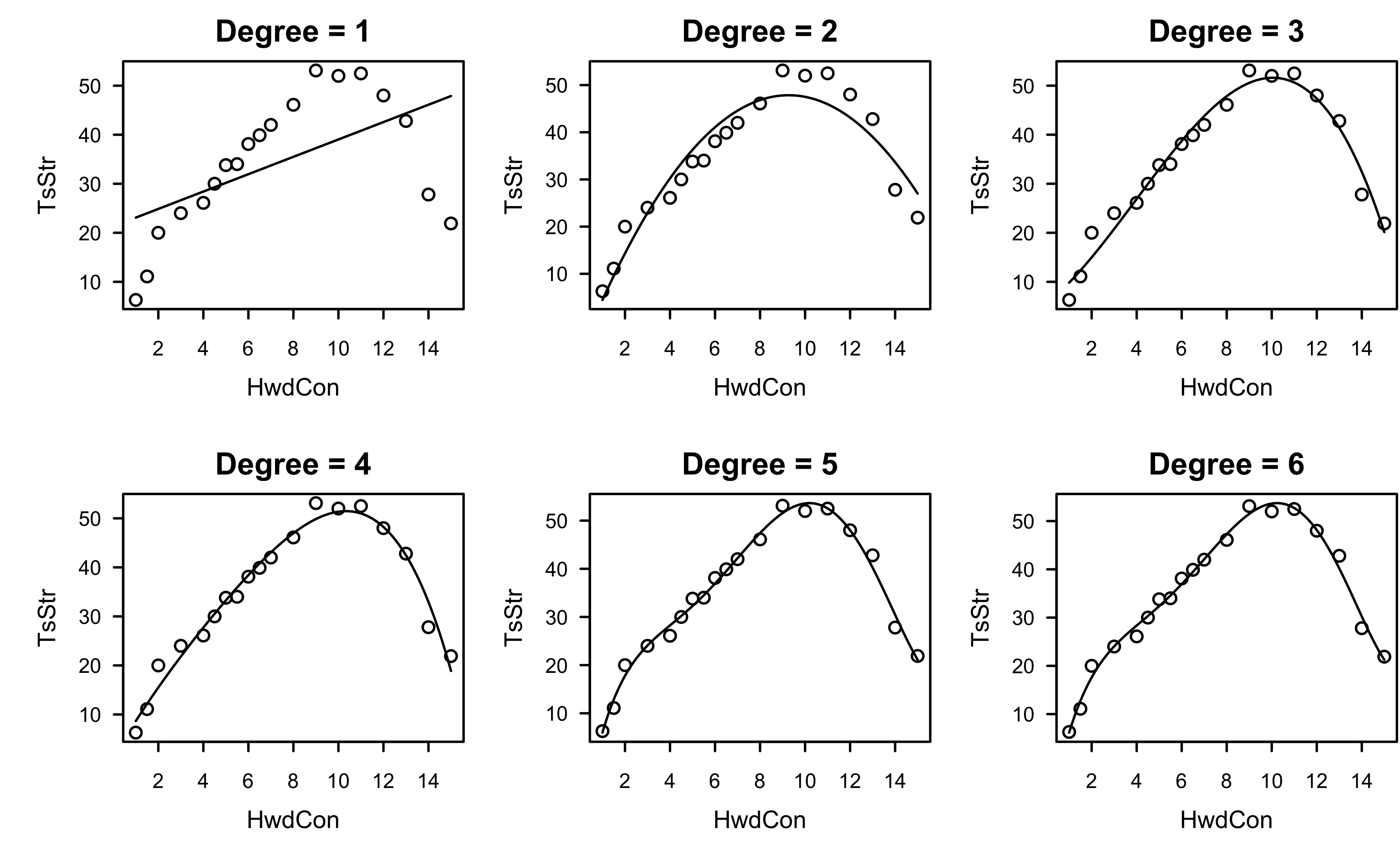

Polynomial regression

Polynomial regression is just a special case of the MLR model

A second order model in a single predictor: \[Y = \beta_0 + \beta_1 X + \beta_2 X^2 + \epsilon\]

A k-th order model in a single predictor (Typically \(k \le 3\)): \[Y = \beta_0 + \sum_{j=1}^k\beta_j X^j + \epsilon\]

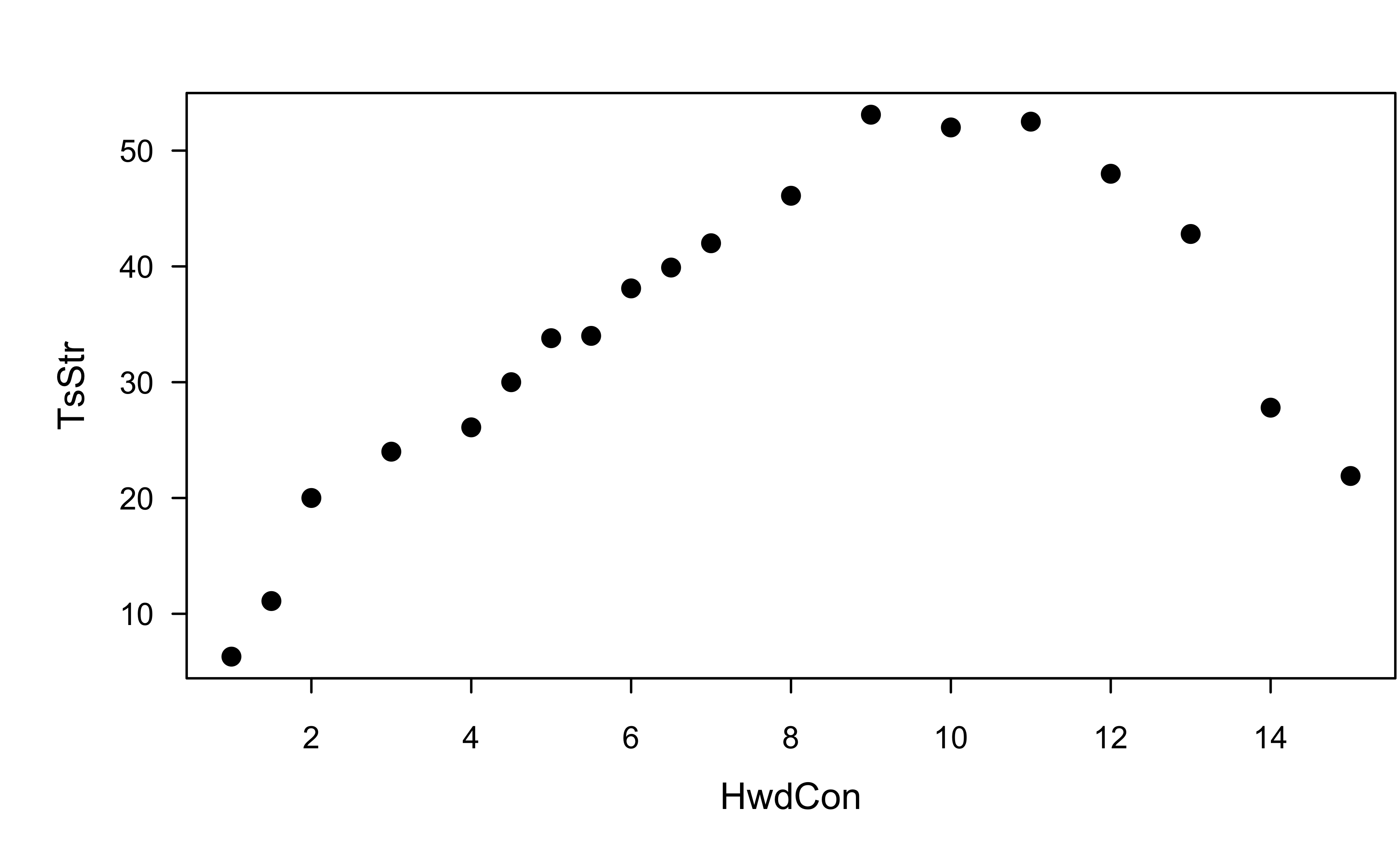

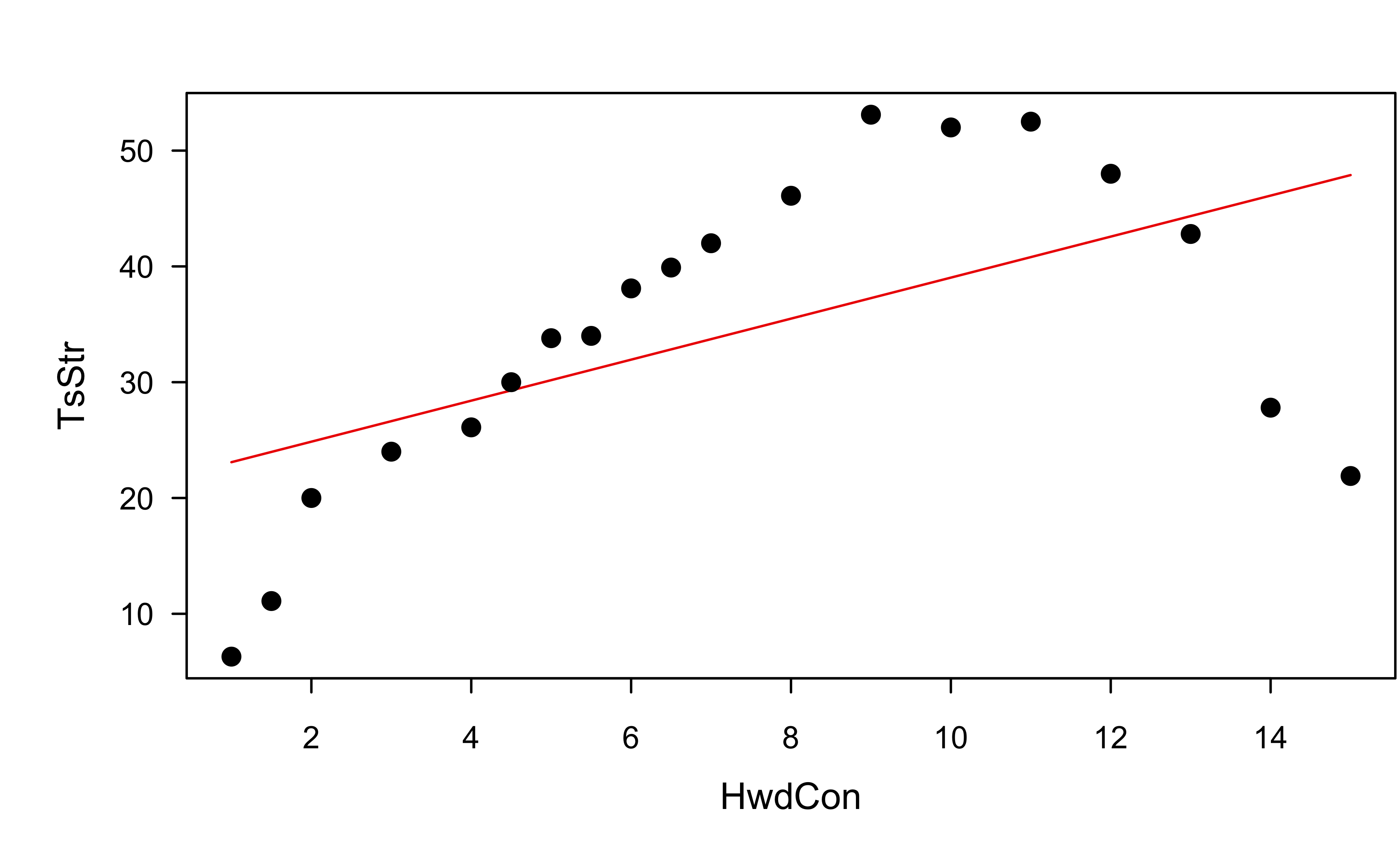

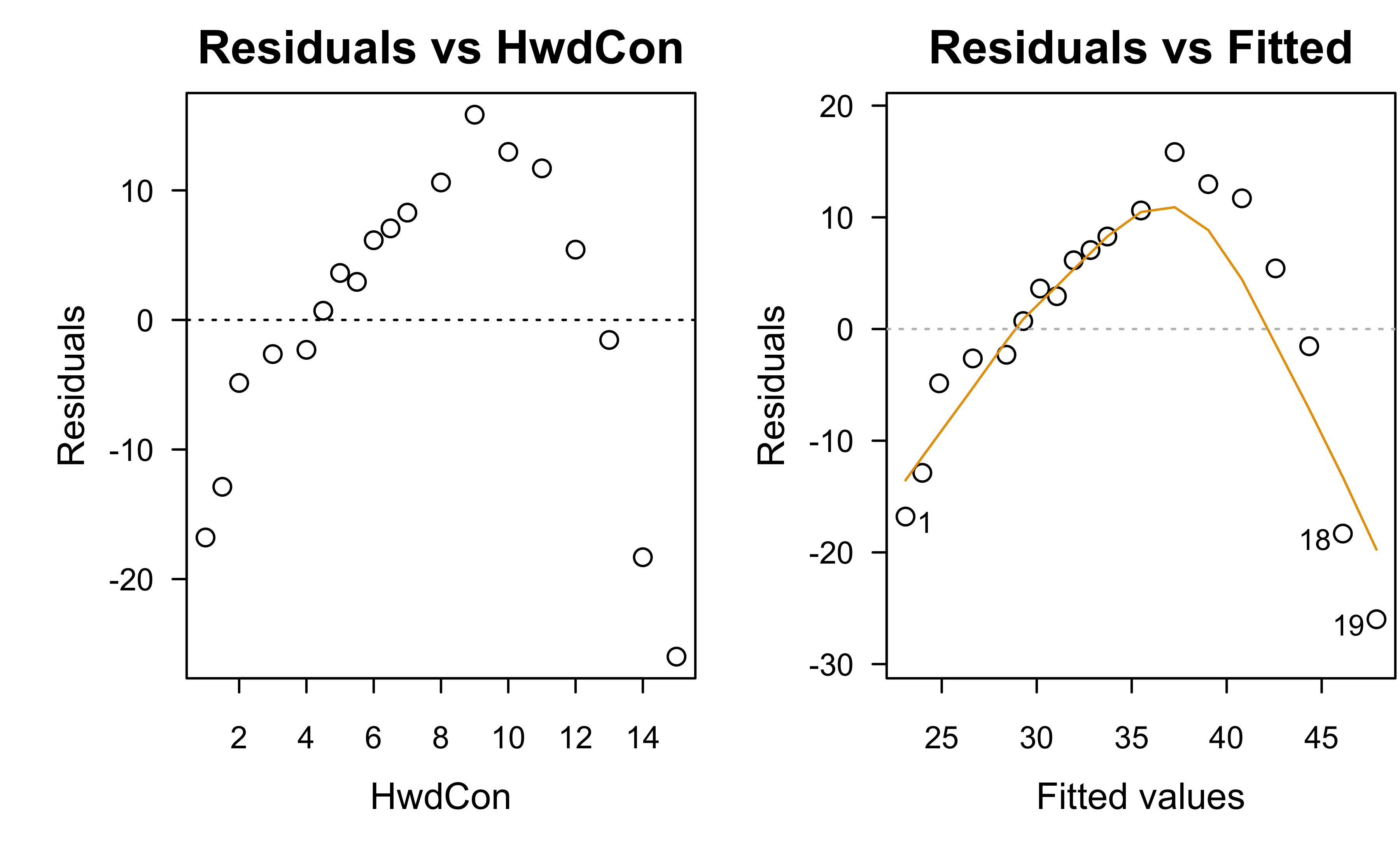

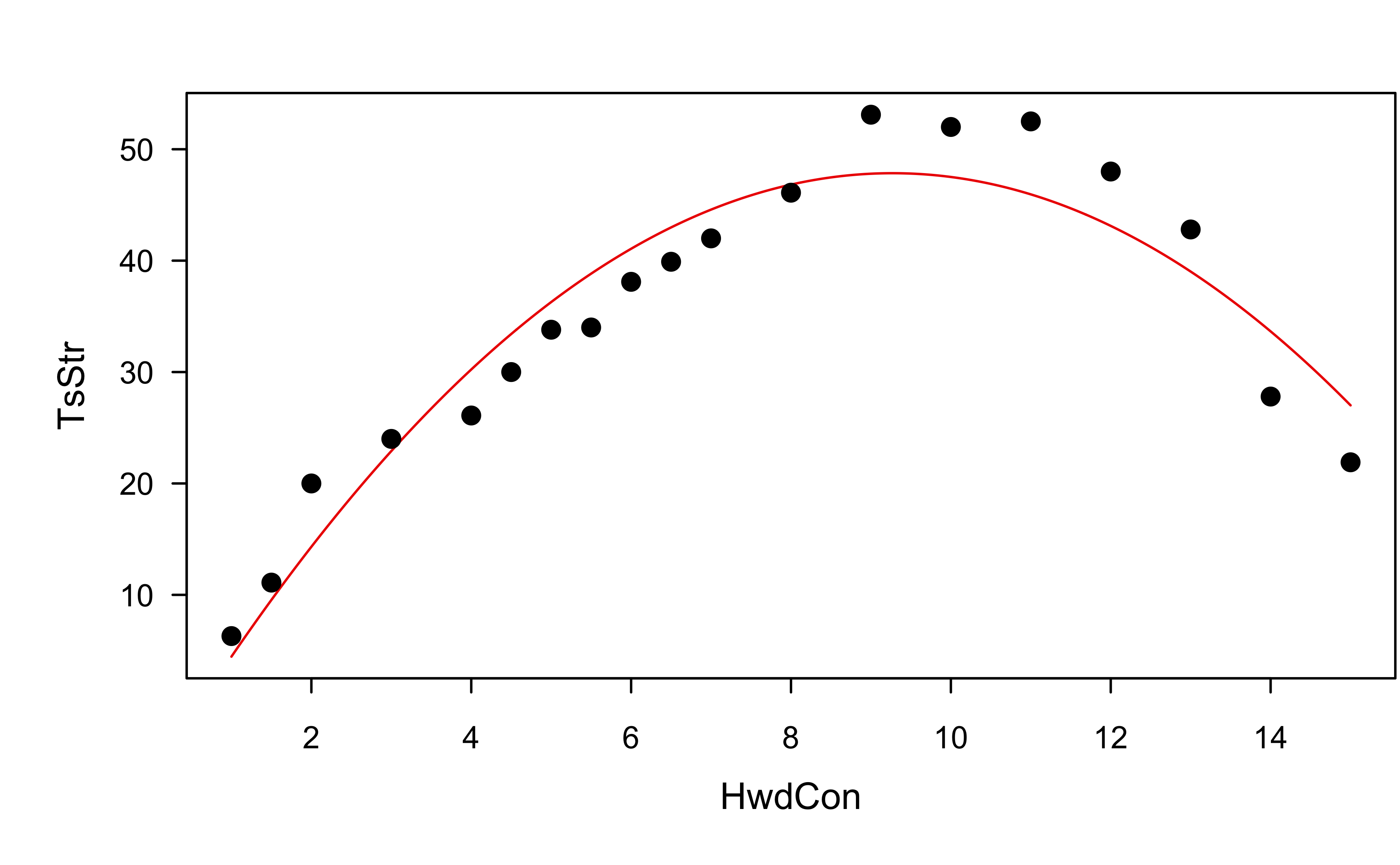

Example: paper strength data

Data concerning the strength of kraft paper and the percentage of hardwood in the batch of pulp from which the paper was produced.

Example: paper strength data

Example: paper strength data

Example: paper strength data

Show R code

Example: paper strength data

Example: paper strength data

Example: paper strength data

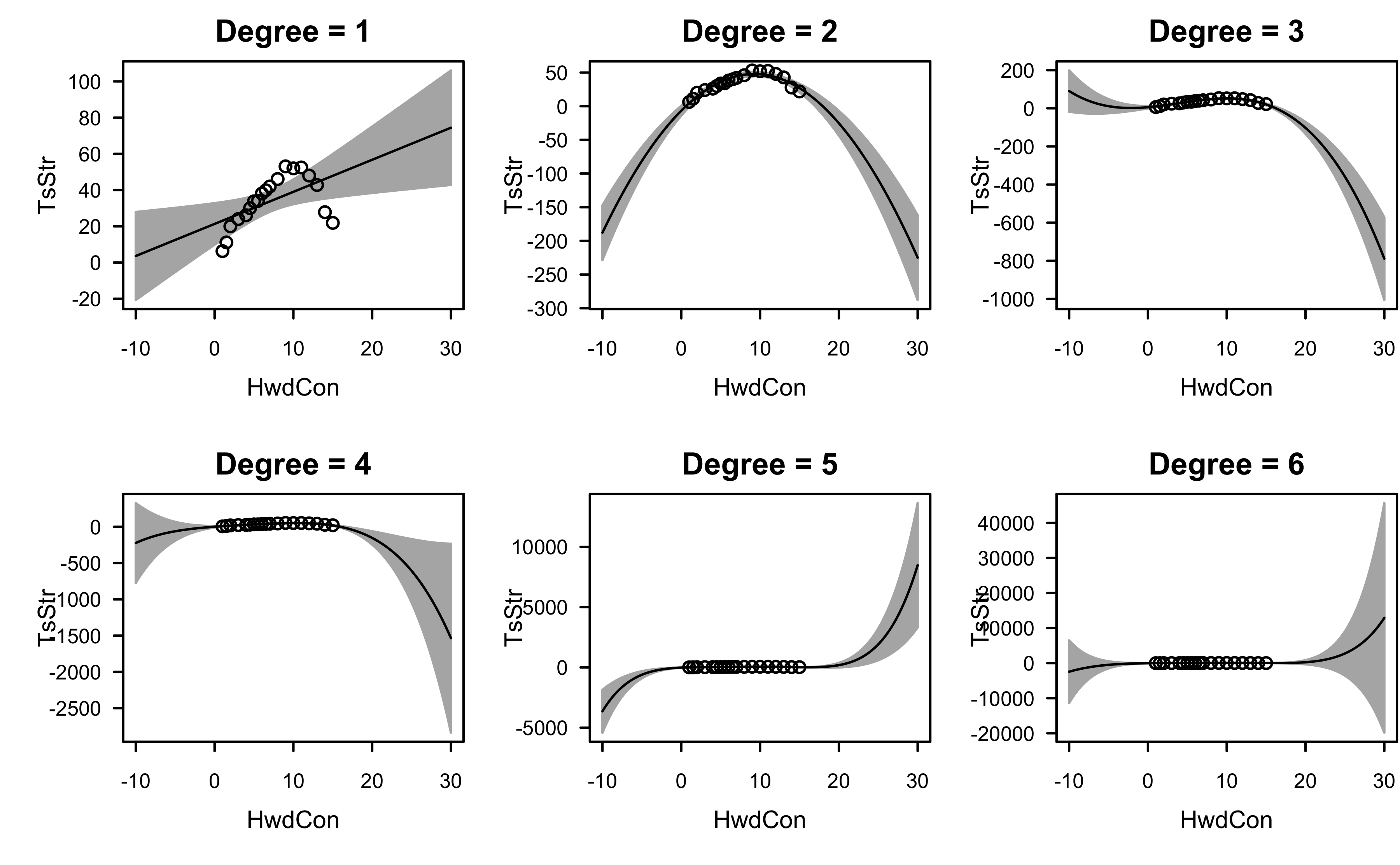

Polynomial regression

Some cautions ⚠️

Keep the order of the model as low as possible

Avoid interpolating the data or over fitting

Use the simplest model possible to explain the data, but no simpler (parsimony)

An \(n - 1\) order model can perfectly fit a data set with \(n\) observations (Why is this bad 🤔)

Polynomial regression

Two model-building strategies:

Fit the lowest order polynomial possible and build up (forward selection)

Fit the highest order polynomial of interest, and remove terms one at a time (backward elimination)

These two procedures may not result in the same final model

Increasing the order can result in an ill-conditioned \(\boldsymbol{X}^\top\boldsymbol{X}\) and multicollinearity

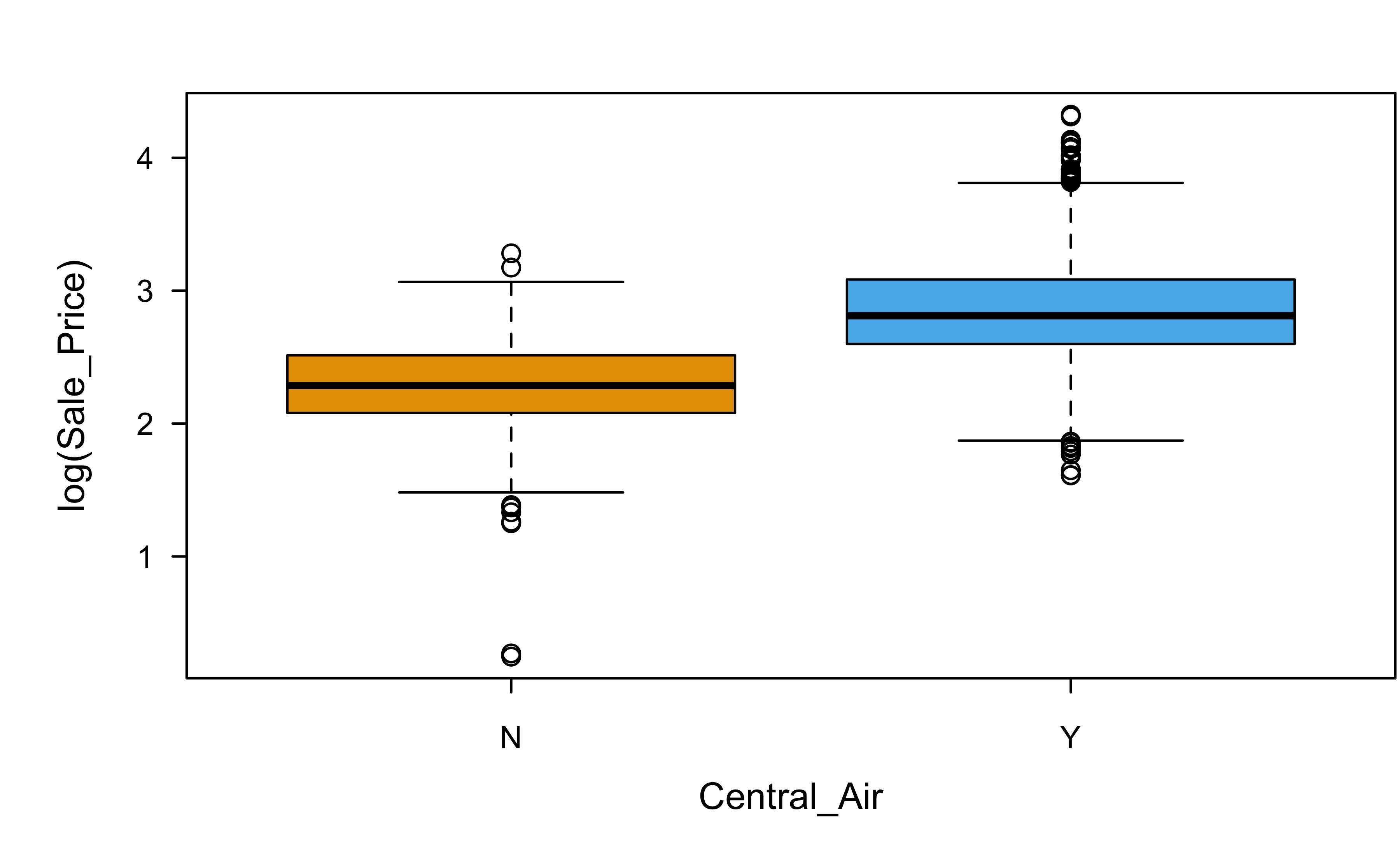

Categorical variables

Categorical variables can be handled in a number of ways in linear models, including

- Dummy encoding (nominal)

- Orthogonal polynomials (ordinal)

Categorical variables

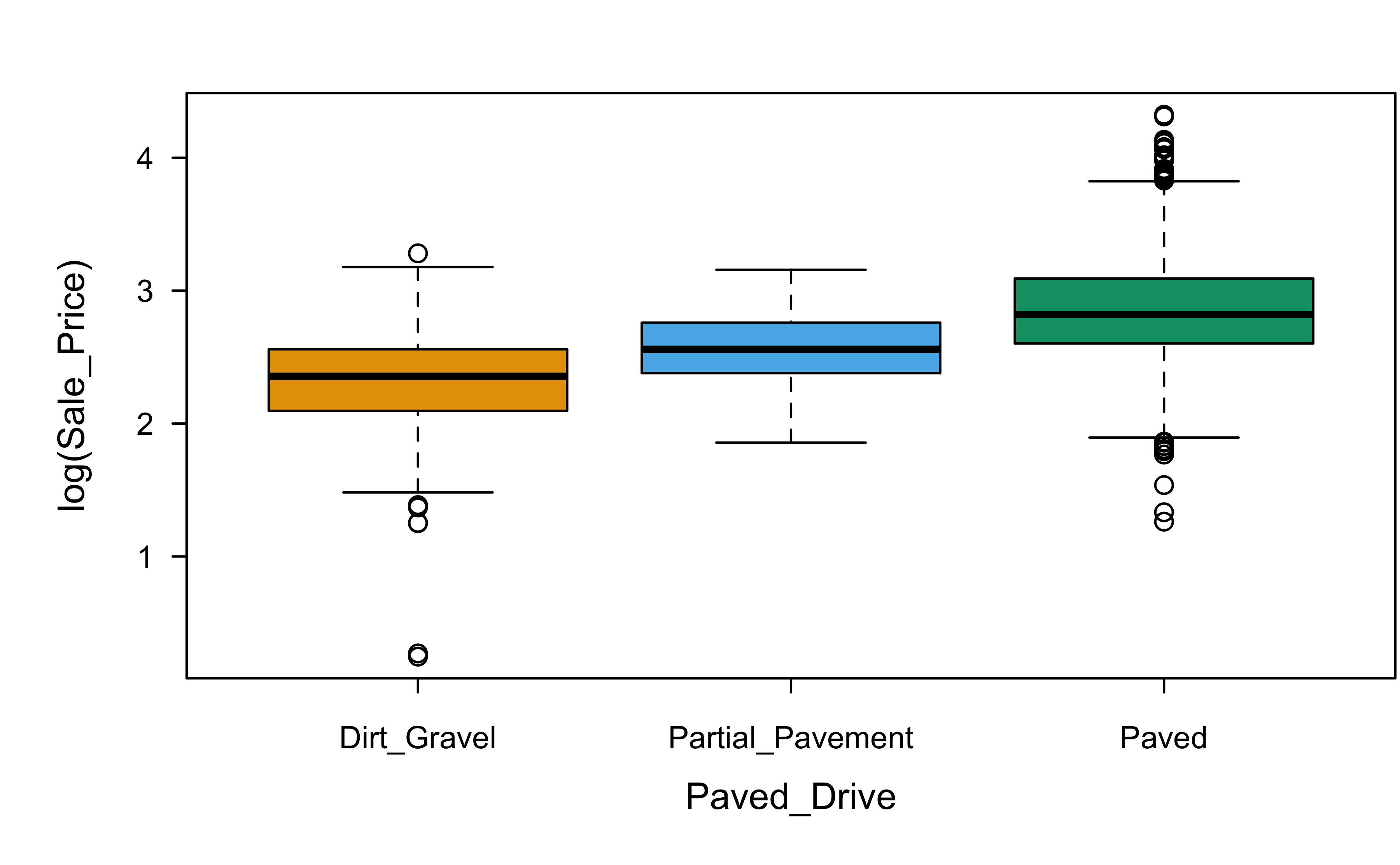

Let’s look at two (nominal) categorical variables:

Categorical variables

Categorical variables

If one of these homes downgraded from a paved driveway to a gravel driveway, would that cause the sale price to decrease? (Think very carefully here!)

Categorical variables

R dummy encodes nominal factors by default:

Show R code

Call:

lm(formula = log(Sale_Price) ~ Gr_Liv_Area + Central_Air + Paved_Drive,

data = ames)

Residuals:

Min 1Q Median 3Q Max

-2.2344 -0.1345 0.0073 0.1453 0.8502

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 1.428e+00 2.418e-02 59.059 < 2e-16 ***

Gr_Liv_Area 5.183e-04 9.520e-06 54.440 < 2e-16 ***

Central_AirY 3.464e-01 2.068e-02 16.747 < 2e-16 ***

Paved_DrivePartial_Pavement 1.334e-01 3.733e-02 3.574 0.000358 ***

Paved_DrivePaved 3.085e-01 1.975e-02 15.618 < 2e-16 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 0.2574 on 2925 degrees of freedom

Multiple R-squared: 0.6018, Adjusted R-squared: 0.6013

F-statistic: 1105 on 4 and 2925 DF, p-value: < 2.2e-16How do you interpret the coefficients here?

Coefficient of determination

The coefficient of determination is the proportion of the variance in the dependent variable that is predictable from the independent variables in the model.

R-squared (\(R^2\))

\(R^2 = \frac{SSR}{SST} = 1 - \frac{SSE}{SST}\)

\(R^2\) will always increase as more terms are added to the model!

Adjusted R-squared (\(R_{adj}^2\))

\(R_{adj}^2 = 1 - \frac{MSE}{SST/\left(n - 1\right)}\)

Penalizes \(R^2\) if there are “too many” terms in the model

\(R_{adj}^2\) and \(MSE\) provide equivalent information

Variable/model selection

- Variable/model selection is a very noisy problem! (Often best to avoid, if feasible)

- Ask the domain experts about important variables (don’t just rely on algorithms)

- P(selecting the “right” variables) = 0 (source)

- “All

modelssubsets of variables are wrong, but some are useful!” - In regression settings, regularization (e.g., ridge regression and the LASSO) is often more useful! (Think about the impact of multicollinearity on variable selection)

Data splitting

If prediction is the goal (e.g., compared to inference and hypothesis testing), the model performance should be assessed rigorously

Data splitting techniques ae key and the type of data splitting to use often depends on the situation (e.g., cross-sectional vs. time-series data)

In simplest terms, random split the data into two parts: A and B. Build a model on part A and see how well it does with predicting the response in part B.

Leakage is a huge concern here, so data splitting ALWAYS has to be done carefully!

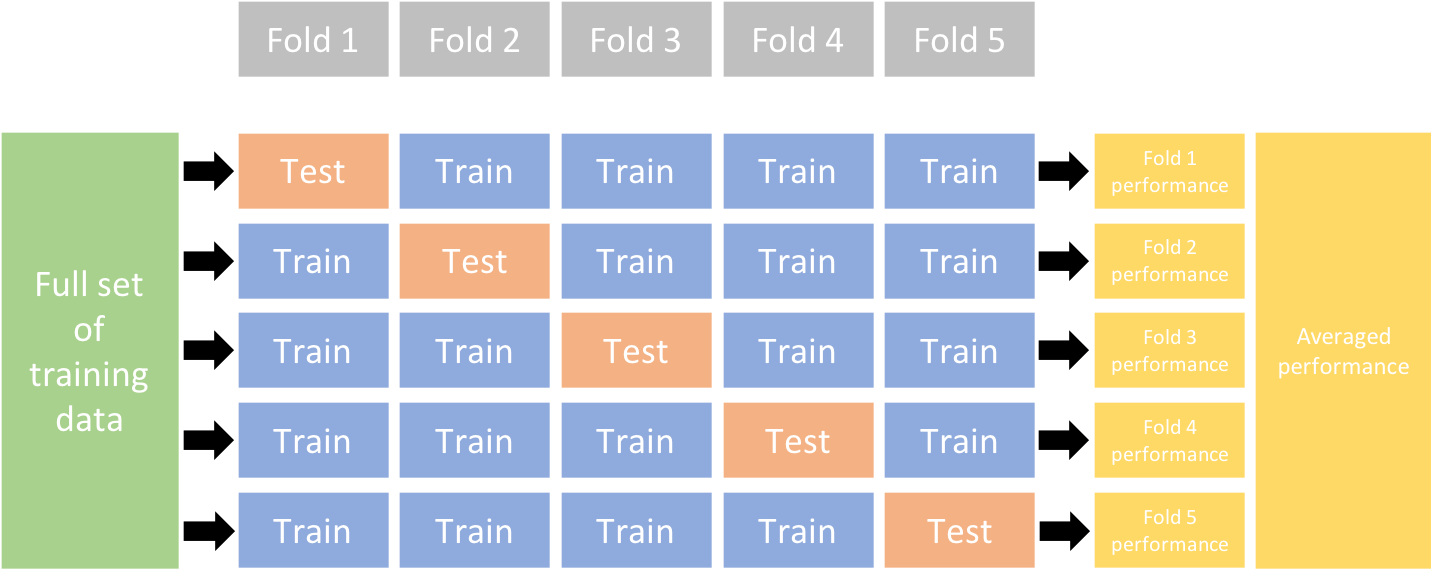

Data splitting: \(k\)-fold cross-validation

The PRESS statistic in linear regression is a special case (\(k = n\)) we get for free!

Questions?

BANA 7042: Statistical Modeling